The Hidden Benefit of MCP: Extreme Departmental Agility

MCP eliminates integration sprawl and technical debt - but the real unlock is organisational agility: every department can adopt the best AI tool without waiting on IT, while still running on one governed knowledge base.

The rush to adopt AI creates a predictable failure mode: teams plug models into everything as fast as possible, then wake up with a tangled web of fragile connectors.

Every new agent, assistant, or workflow wants access to the same things - policies, procedures, customer history, operational standards, product documentation. So teams wire direct API connections from each tool to each knowledge source.

It works - until it doesn’t.

MCP’s obvious benefit is faster integration. A deeper one is avoiding technical debt: decoupling your knowledge base from the tools that consume it prevents “spaghetti architecture” before it forms.

We’ve been rolling out Model Context Protocol (MCP) architectures across large-scale enterprises to solve this exact problem. Think of MCP as a universal USB-C port for your company’s data: one standard interface that makes your proprietary knowledge accessible across AI tools without custom connector sprawl.
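Underneath the analogy, the "port" is a wire format. MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with a `tools/call` request that carries the tool's `name` and its `arguments`. The sketch below builds such a request in plain Python. The tool name `search_policies` and its `query` argument are hypothetical, and a real client would normally use an MCP SDK over a transport such as stdio or HTTP rather than hand-building messages.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialise an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",          # MCP uses JSON-RPC 2.0 framing
        "id": request_id,          # lets the client match the server's response
        "method": "tools/call",    # MCP method for invoking a server-side tool
        "params": {
            "name": tool_name,     # hypothetical tool exposed by some MCP server
            "arguments": arguments,
        },
    }
    return json.dumps(message)


# Any MCP-compliant client would send the same shape of message.
payload = build_tool_call(1, "search_policies", {"query": "travel expenses"})
print(payload)
```

Because every client speaks this same shape, the server behind it does not need to know which vendor's tool is asking.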

But there’s a second, less-discussed benefit that changes the speed of the business.

The Hidden Benefit: Extreme Departmental Agility

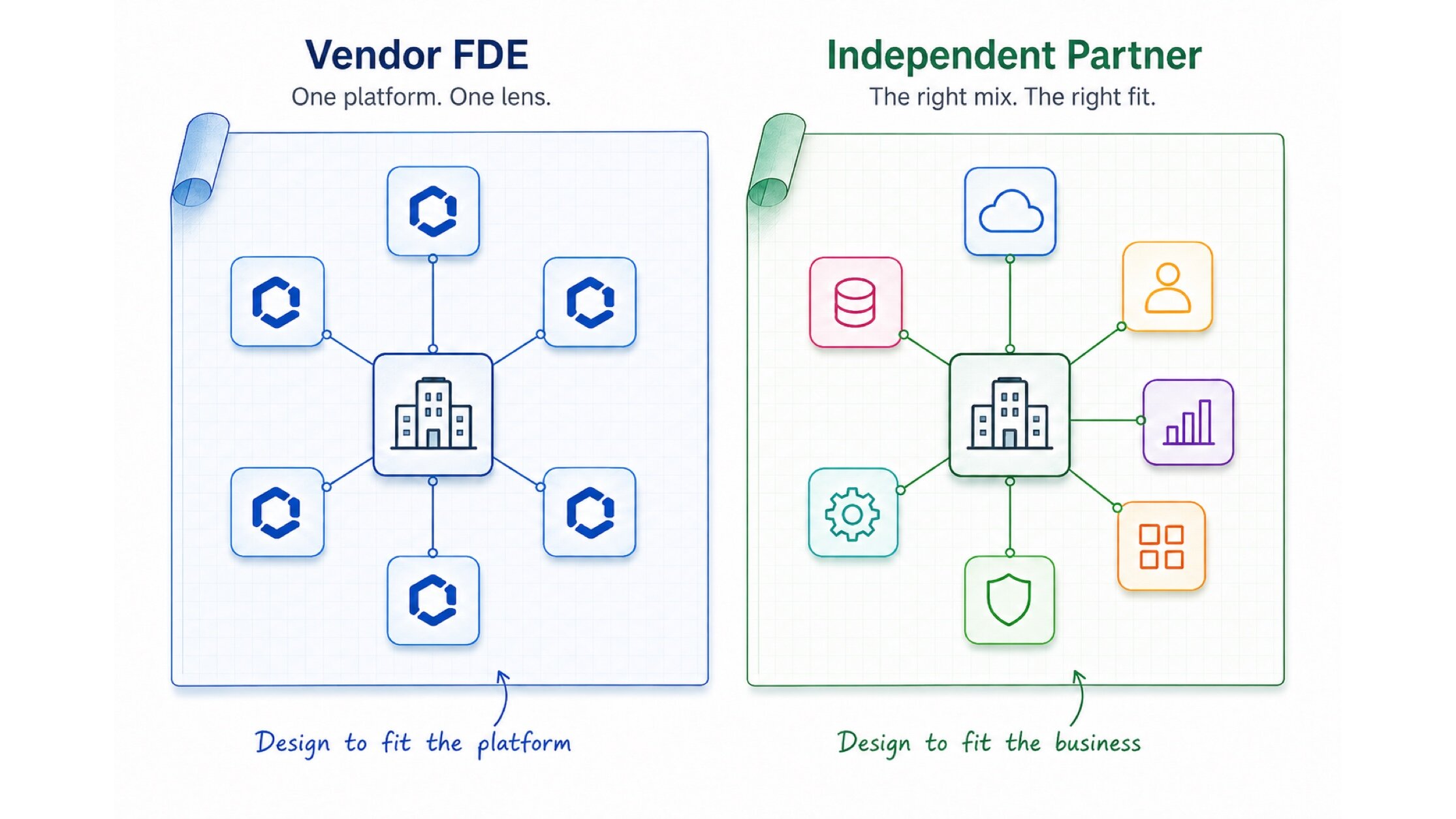

When your corporate knowledge base is accessible via MCP, every team can choose the AI tools that fit their work - and still pull from the same curated, governed organisational context.

Instead of “Which tool integrates with our stack?”, the question becomes “Which tool is best for this team this quarter?”

This changes the operating model:

- Departments adopt best-in-class tools without waiting for IT to build connectors

- Tools remain modular and swappable as vendors shift and capabilities evolve

- Governance stays centralised because the knowledge base stays centralised

Decoupling the Intelligence Layer

MCP separates the “knowledge foundation” from the “intelligence layer.” Your data stays stable and governed, while models and tools can change as often as the market demands.

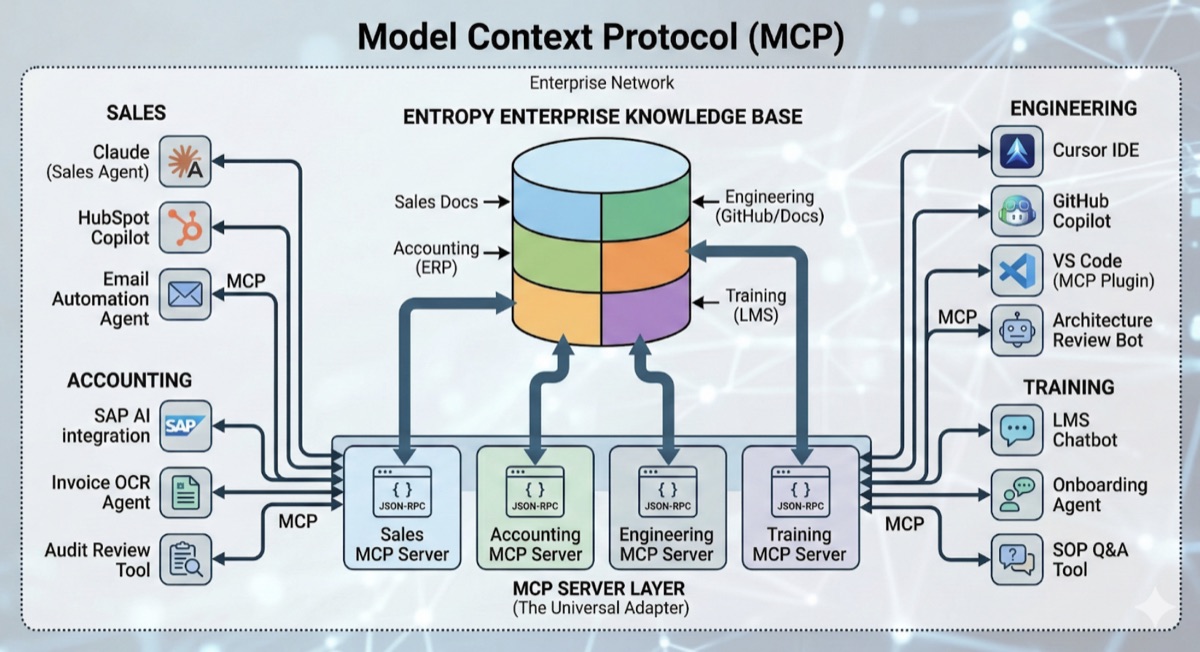

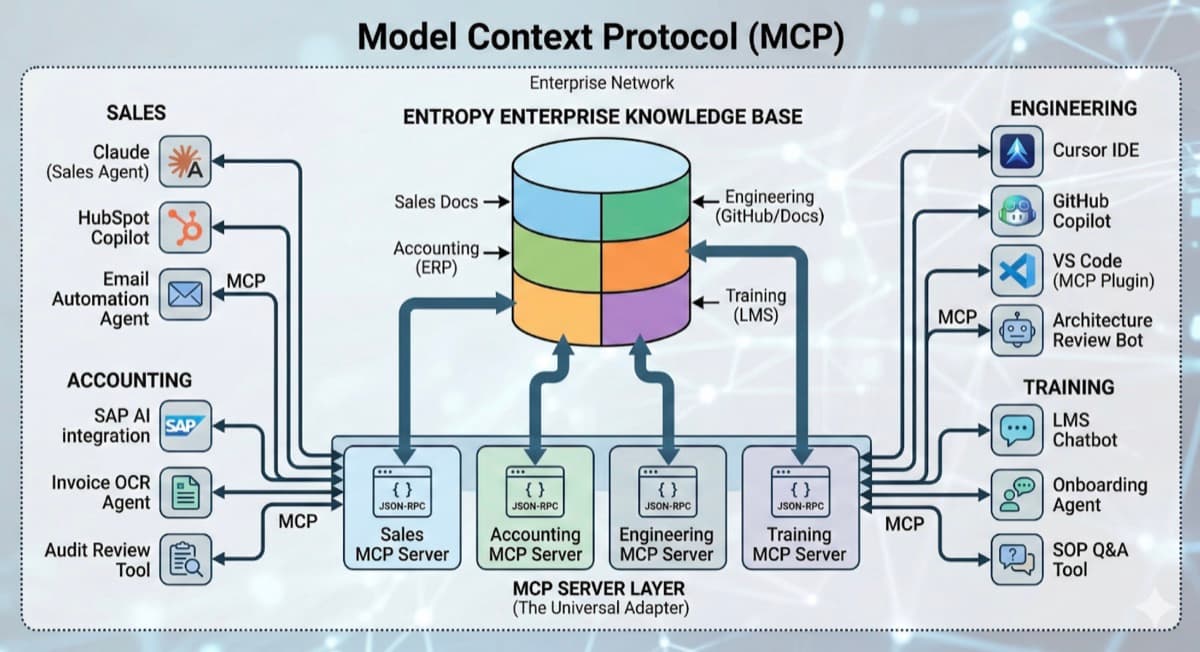

In practice, it looks like this:

- Sales can run Claude-based outreach and account intelligence agents

- Engineering can live in Cursor or VS Code for rapid shipping

- Accounting can use specialised OCR and audit tooling for compliance workflows

Provided these tools are MCP-compliant, they all pull from the same curated, segmented corporate data store. You don’t wait for a vendor to ship a one-off integration: if a tool supports MCP, connecting it to your company’s live intelligence is a matter of configuration, not custom code.

This is how enterprises stay at the cutting edge without turning IT into a permanent integration factory.

The Architecture: Hub and Spoke, Not Point-to-Point

At the core is a governed knowledge base - curated, segmented, and permissioned - exposed through MCP servers that represent distinct data “segments” (by department, domain, sensitivity, or system boundary).

The result is a hub-and-spoke model:

- The hub is your corporate knowledge foundation (vector stores, document systems, databases, SaaS tools)

- The spokes are MCP-compliant tools (agents, IDE copilots, workflow automation platforms, support assistants)

This keeps your proprietary data secure and managed in one place, while your AI tools remain modular and replaceable.
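A minimal sketch of the hub-and-spoke shape in plain Python, with no MCP SDK involved. `SegmentServer` and `Spoke` are hypothetical classes, and their `list_tools` and `call_tool` methods only mimic the roles of MCP's `tools/list` and `tools/call`. The point is structural: spokes depend on one uniform interface, so the hub does not change when a spoke is swapped.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SegmentServer:
    """Hypothetical stand-in for one MCP server fronting one governed data segment."""
    segment: str
    tools: Dict[str, Callable[..., object]] = field(default_factory=dict)

    def list_tools(self) -> List[str]:
        # Plays the role of MCP's tools/list: advertise what this segment offers.
        return sorted(self.tools)

    def call_tool(self, name: str, arguments: dict) -> object:
        # Plays the role of MCP's tools/call: one uniform entry point for every client.
        return self.tools[name](**arguments)


class Spoke:
    """Any MCP-compliant tool: it knows the uniform interface, not the systems behind it."""

    def __init__(self, name: str, hub: Dict[str, SegmentServer]):
        self.name = name
        self.hub = hub

    def ask(self, segment: str, tool: str, **arguments) -> object:
        return self.hub[segment].call_tool(tool, arguments)


# The hub: each segment sits behind its own server (the data here is dummy).
hub = {
    "sales": SegmentServer("sales", {"account_summary": lambda account_id: f"summary for {account_id}"}),
    "finance": SegmentServer("finance", {"ledger_balance": lambda ledger_id: 0.0}),
}

# Two different spokes share the same hub; replacing either one changes no server code.
outreach_agent = Spoke("outreach-agent", hub)
ide_copilot = Spoke("ide-copilot", hub)
print(outreach_agent.ask("sales", "account_summary", account_id="A-42"))
print(ide_copilot.ask("finance", "ledger_balance", ledger_id="L-7"))
```

In a real deployment the spokes would be off-the-shelf MCP clients and the servers would be built with an MCP SDK; the sketch only shows that the dependency points one way, from tools to the hub.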

What MCP Fixes in the Real World

1) Eliminating Integration Sprawl

Without MCP, every new tool becomes another set of custom API glue. Over time, you’re maintaining dozens of brittle integrations - each with its own auth, schema quirks, rate limits, and outage modes.

With MCP, you build a single MCP server per data segment and stop building point-to-point connections: each tool connects once through the standard interface instead of once per system.

Integration Sprawl Is Technical Debt by Default

When every tool integrates directly with every system, you don’t have an architecture - you have a patchwork. MCP collapses this into an intentional interface boundary.
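The sprawl is easy to quantify with back-of-envelope arithmetic. If T tools each connect directly to S systems, you maintain T × S connectors; with one MCP server per system and an MCP client in each tool, the count becomes T + S. The tool and system counts below are illustrative, not measurements.

```python
def point_to_point(tools: int, systems: int) -> int:
    # Every tool needs its own custom connector to every system.
    return tools * systems


def via_mcp(tools: int, systems: int) -> int:
    # One MCP server per system (segment) plus one MCP client per tool.
    return tools + systems


for tools, systems in [(3, 4), (10, 12)]:
    print(f"{tools} tools x {systems} systems: "
          f"{point_to_point(tools, systems)} connectors vs {via_mcp(tools, systems)} MCP endpoints")
```

The gap widens as either side grows, which is why sprawl tends to surface only after the third or fourth tool arrives.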

2) Solving Semantic Debt

A common reason AI fails in enterprises isn’t model capability - it’s meaning.

In one system, an “Account” is a client. In another, an “Account” is a ledger entry. In one team, “Churn” means cancellation. In another, it means downgrade.

This is semantic debt: the organisation has shared words with different meanings, and generic RAG setups don’t reliably resolve the nuance.

MCP helps because servers can expose tools with structured metadata, clear descriptions, and domain-specific context, so the AI more reliably understands what it’s looking at and how to use it.

Reducing hallucination isn’t just a matter of bigger models. It’s about better context boundaries and clearer semantics.
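MCP tool definitions carry a `name`, a natural-language `description`, and a JSON Schema `inputSchema`, which gives each segment a place to pin down meaning. In this sketch the `GLOSSARY` dict, the segment names, and the tool names are hypothetical. The idea is that each segment prefixes its tool names and states its own definition of an overloaded term, so "Account" is never left for the model to guess.

```python
import json

# Hypothetical per-segment glossary: the same word, defined differently.
GLOSSARY = {
    "crm": {"Account": "a client organisation we sell to"},
    "finance": {"Account": "a ledger entry in the chart of accounts"},
}


def make_tool(segment: str, name: str, purpose: str, term: str, params: dict) -> dict:
    """Build an MCP-style tool definition whose description pins down a domain term."""
    meaning = GLOSSARY[segment][term]
    return {
        "name": f"{segment}_{name}",  # segment prefix keeps same-named concepts apart
        "description": f"{purpose} Here, '{term}' means {meaning}.",
        "inputSchema": {               # JSON Schema describing the tool's arguments
            "type": "object",
            "properties": params,
            "required": sorted(params),
        },
    }


crm_tool = make_tool("crm", "get_account", "Look up a client record.", "Account",
                     {"account_id": {"type": "string"}})
ledger_tool = make_tool("finance", "get_account", "Look up a ledger entry.", "Account",
                        {"account_code": {"type": "string"}})
print(json.dumps([crm_tool, ledger_tool], indent=2))
```

Distinct names and argument schemas (`account_id` versus `account_code`) make a wrong call easier to catch than a prose-only description would.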

3) Real-Time Context vs Stale RAG

Traditional RAG often relies on periodic indexing. Even a strong pipeline still produces snapshots: “what the world looked like an hour ago.”

MCP supports live, query-based access patterns. Instead of retrieving cached chunks from an index, the AI can query the live source of truth when needed - with permissions and guardrails enforced at the boundary.

This is the difference between “AI that knows” and “AI that checks.”
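A minimal sketch of "checking" at the boundary: a server-side handler that enforces permissions first, then reads the live source at call time. The result is shaped loosely like an MCP tool result (a list of `content` items plus an `isError` flag). `LIVE_INVENTORY`, `POLICY`, `Caller`, and `handle_tool_call` are hypothetical, and how the server learns the caller's identity (for example, from transport-level auth) is assumed rather than shown.

```python
from dataclasses import dataclass

# Hypothetical live system of record; in practice a database or API call.
LIVE_INVENTORY = {"SKU-1": 12}

# Hypothetical policy: which roles may call which tools.
POLICY = {"get_stock_level": {"sales", "operations"}}


@dataclass
class Caller:
    user: str
    role: str  # assumed to come from authenticated transport, not from the model


def handle_tool_call(caller: Caller, name: str, arguments: dict) -> dict:
    """Enforce permissions at the boundary, then query the live source."""
    if caller.role not in POLICY.get(name, set()):
        # Guardrail: the model never receives data the caller isn't entitled to.
        return {"isError": True, "content": [{"type": "text", "text": "permission denied"}]}
    level = LIVE_INVENTORY[arguments["sku"]]  # read now, not from a snapshot
    text = f"{arguments['sku']}: {level} in stock"
    return {"isError": False, "content": [{"type": "text", "text": text}]}


LIVE_INVENTORY["SKU-1"] = 7  # the source of truth changes...
print(handle_tool_call(Caller("ana", "sales"), "get_stock_level", {"sku": "SKU-1"}))   # ...and the next call sees it
print(handle_tool_call(Caller("bob", "intern"), "get_stock_level", {"sku": "SKU-1"}))  # denied by policy
```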

Design Principle

Index what should be searchable. Query live what must be correct.
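One way to operationalise the principle is to give each kind of data a tolerance for staleness and compare it with your indexing cadence. In this sketch the one-hour refresh interval, the data kinds, and their tolerances are hypothetical placeholders; unknown kinds default to a live query, on the assumption that it is safer to check than to guess.

```python
from datetime import timedelta

INDEX_REFRESH = timedelta(hours=1)  # assumed re-indexing cadence

# Hypothetical tolerance for stale answers, per kind of data.
MAX_STALENESS = {
    "policy_document": timedelta(days=7),
    "product_docs": timedelta(days=1),
    "account_balance": timedelta(0),
    "stock_level": timedelta(minutes=5),
}


def choose_source(data_kind: str) -> str:
    """Use the index only if its worst-case age is acceptable; otherwise query live."""
    tolerance = MAX_STALENESS.get(data_kind, timedelta(0))  # unknown kinds: treat as must-be-correct
    return "index" if tolerance >= INDEX_REFRESH else "live"


for kind in MAX_STALENESS:
    print(kind, "->", choose_source(kind))
```

Documents and policies stay searchable through the index, while balances and stock levels always go to the source of truth.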

The Strategic Outcome

MCP isn’t just an AI integration strategy. It’s an operating model for change.

Tools will keep shifting: today’s best assistant becomes tomorrow’s commodity. Departments will keep finding better workflows. Models will keep evolving.

The goal isn’t “AI in every department.” The goal is a unified intelligence layer that is governed like finance, but moves like product.

Implement MCP once, and your organisation is structurally prepared for whatever model, tool, or agent ecosystem comes next.

Exploring MCP for your enterprise? Let’s discuss what a governed, plug-and-play intelligence layer would look like across your departments.

25+ years in technology innovation. Founder and former CTO of MyFleet, a leading telematics platform. Technical Lead for GenAI at University of Newcastle. Expert in AI, Machine Learning, IoT, and Agile development.

Ready to scale your operations?

Let's discuss how Kipanga can architect the systems that power your next phase of growth.
