AI Agents in Enterprise: Beyond the Hype Cycle

With 40% of enterprise apps embedding AI agents by end of 2026, we cut through the noise to show what's actually working - and what's still failing - in production deployments.

The hype is deafening. Every vendor promises transformation. Gartner predicts 40% of enterprise applications will embed AI agents by end of 2026 - up from less than 5% in 2025. Meanwhile, MIT Sloan warns that agentic AI is entering the "trough of disillusionment."

Both are right.

The enterprises winning with AI agents aren't deploying the most agents. They're deploying agents thoughtfully - escaping "pilot purgatory" while others chase demos that never reach production.

After 30+ enterprise deployments across financial services, logistics, healthcare, and manufacturing, we've identified what separates the 13% with agents in production from the 64% with no formalized initiative at all.

The State of Play: January 2026

Let's ground this in data. According to Recon Analytics' survey of 120,000+ enterprise respondents:

The encouraging signal? Production deployments nearly doubled in just four months - from 7.2% in August 2025 to 13.2% by December. The gap between leaders and laggards is widening fast.

Why 2026 Is Different

Three shifts make this year an inflection point:

The End of Single-Purpose Agents

"We've moved past the era of single-purpose agents," notes IBM's Chris Hay. In 2024, agents were small and specialized: the email writer, the research helper. Now we're seeing multi-agent ecosystems where specialized agents collaborate - a researcher gathers information, a coder implements, an analyst validates.

Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025. This isn't curiosity - it's architectural planning.

Decision Velocity as the Metric

Agentic AI is increasingly recognized as a feature, not a strategy. The real game is "decision velocity" - how quickly smaller decision trees can be automated at scale.

The Decision Velocity Framework

When Constellation Research's Michael Ni called agentic AI "merely a feature," it reframed the conversation. The goal isn't agent deployment - it's collapsing decision trees to achieve 5x-10x improvements in process speed.

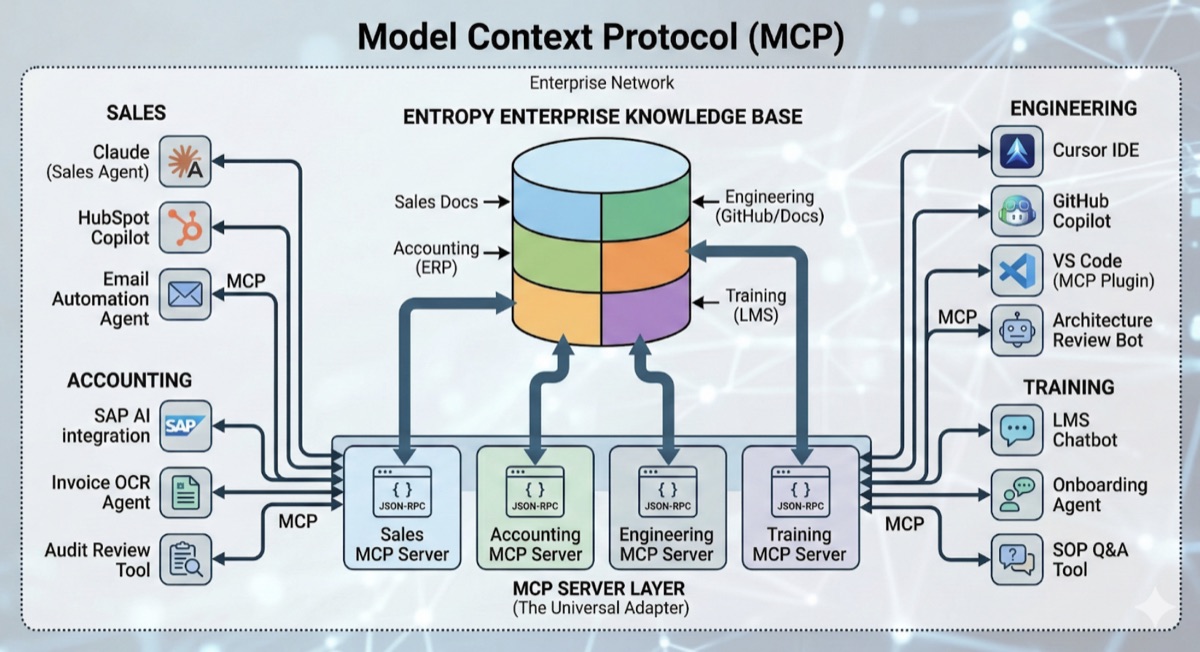

GraphRAG and Knowledge Foundations

The difference between a useful agent and a hallucinating liability is the quality of its foundation. In 2026, enterprise automation hinges on GraphRAG - retrieval-augmented generation powered by semantic knowledge backbones.

The knowledge graph acts as a shared memory and coordination hub, connecting specialized agents across departments and data systems.
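
To make the "shared memory" idea concrete, here is a minimal sketch, assuming a toy in-memory graph of (subject, relation, object) triples. The `KnowledgeGraph` class, the `retrieve_context` helper, and the entity names are all hypothetical stand-ins for a real graph store and GraphRAG pipeline; the point is only that an agent pulls connected facts, not isolated text chunks, before it reasons.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Toy triple store (hypothetical); a stand-in for a real graph database."""

    def __init__(self):
        self._edges = defaultdict(list)  # subject -> [(relation, object), ...]

    def add(self, subject, relation, obj):
        self._edges[subject].append((relation, obj))

    def retrieve_context(self, entity, max_hops=2):
        """Collect facts reachable from `entity` within `max_hops` edges."""
        facts, frontier, seen = [], [entity], {entity}
        for _ in range(max_hops):
            next_frontier = []
            for node in frontier:
                for relation, obj in self._edges.get(node, []):
                    facts.append(f"{node} {relation} {obj}")
                    if obj not in seen:
                        seen.add(obj)
                        next_frontier.append(obj)
            frontier = next_frontier
        return facts


# Illustrative data only: any agent can read the same connected facts.
kg = KnowledgeGraph()
kg.add("Order-1001", "placed_by", "Acme Corp")
kg.add("Acme Corp", "prefers_carrier", "FastFreight")
kg.add("FastFreight", "serves_region", "APAC")

context = kg.retrieve_context("Order-1001", max_hops=2)
```

In a real deployment the retrieved facts would be passed to the model as grounding context; the hop limit is one simple way to keep that context small and relevant.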

Where AI Agents Deliver Value

After analyzing production deployments, clear patterns emerge:

High-Confidence, Low-Stakes Decisions

G2's Enterprise AI Agents Report identifies the first wave of full autonomy:

- Routine customer inquiries (40% ticket deflection typical)

- Data entry and validation from structured sources

- Status updates and notifications triggered by system events

- Document classification and routing with clear taxonomies

These aren't glamorous use cases. They're the ones that actually ship.

Most organizations remain in "autonomy with guardrails" - and that's exactly where they should be.

Document Processing at Scale

Enterprises drown in unstructured documents. AI agents extract, classify, validate, and route - turning weeks into hours.

Production results from a $400M financial services firm:

Monitoring and Anomaly Response

AI agents excel at continuous observation - detecting performance degradation, diagnosing likely causes, executing remediation, and escalating when intervention fails. The 60% faster incident response reported by enterprises isn't from better alerting; it's from automated first-response actions.
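
The detect, diagnose, remediate, escalate sequence can be sketched as a single first-response function. This is an illustrative outline under stated assumptions, not a production design: the latency threshold, the `diagnose` placeholder, and the injected `remediate` and `check_latency` callables are hypothetical and would map to your own monitoring and runbook tooling.

```python
from dataclasses import dataclass, field

LATENCY_THRESHOLD_MS = 500.0  # hypothetical alert threshold


@dataclass
class Incident:
    service: str
    latency_ms: float
    actions: list = field(default_factory=list)  # audit trail of steps taken


def diagnose(incident):
    """Placeholder diagnosis: a real agent would inspect logs and metrics."""
    return "restart_service"


def first_response(incident, remediate, check_latency):
    """Run detect -> diagnose -> remediate -> escalate for one reading."""
    if incident.latency_ms <= LATENCY_THRESHOLD_MS:
        return "healthy"  # nothing detected
    action = diagnose(incident)
    incident.actions.append(action)
    remediate(incident.service, action)
    if check_latency(incident.service) <= LATENCY_THRESHOLD_MS:
        return "remediated"
    # Remediation did not help: hand over to a human with the audit trail.
    incident.actions.append("escalate_to_human")
    return "escalated"


# Usage with stubbed tooling (assumed values, for illustration only).
outcome = first_response(
    Incident("checkout-api", 900.0),
    remediate=lambda service, action: None,
    check_latency=lambda service: 120.0,
)
```

Passing the remediation and health-check functions in as arguments keeps the decision logic testable without touching live systems.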

Where AI Agents Still Struggle

Equally critical: understanding the boundaries.

The Pilot Purgatory Problem

Nearly two-thirds of organizations remain stuck in the pilot stage, unable to scale AI across the enterprise. The pattern is consistent: you can get to 80% accuracy with 20% effort - enough to close a pilot. But production demands 99%+, and that last stretch takes 100x more work.

The 80/20 Trap

Demo-ready isn't production-ready. The gap between "impressive pilot" and "reliable system" is where most initiatives die. Budget for the final 20% as if it were a separate project - because it is.

Novel Situations and Edge Cases

AI agents learn from patterns. When situations fall outside training data, behavior becomes unpredictable. One logistics provider found 22% of exceptions - those requiring negotiation, judgment, or relationship management - still require human handling. This isn't a limitation to overcome; it's a design principle to embrace.

The Trust Deficit

Trust has become the currency of AI deployment. In 2026, as regulatory frameworks mature and scrutiny sharpens, organizations need to engineer trust - not assume it. The path forward lies in structured, semantic data that machines can reason over and humans can understand.

The Implementation Framework

Successful deployments follow a consistent pattern:

Phase 1: Use Case Selection (Weeks 1-4)

Not every process benefits from agents. Evaluate candidates against:

| Criterion | Question | Weight |
|---|---|---|
| Volume | How many decisions per day? | High |
| Confidence Distribution | What % are high-confidence? | Critical |
| Data Availability | Is training data clean and accessible? | Critical |
| Error Cost | What's the impact of a wrong decision? | High |
| Human Bottleneck | Is capacity limiting throughput? | Medium |

The best initial use cases are high-volume, high-confidence, and low-stakes. Prove value here before expanding to ambiguous territory.
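
One way to turn the criteria above into a comparable number is a weighted score with a hard gate on the Critical criteria. The sketch below is an assumption-heavy illustration: the numeric weights (Critical = 3, High = 2, Medium = 1), the 1-5 rating scale, and the `critical_floor` cutoff are hypothetical choices, not part of the framework itself. Ratings are "favorability" scores, so Error Cost is rated 5 when a wrong decision is cheap.

```python
WEIGHTS = {"Critical": 3, "High": 2, "Medium": 1}  # hypothetical numeric weights

CRITERIA = {
    "volume": "High",
    "confidence_distribution": "Critical",
    "data_availability": "Critical",
    "error_cost": "High",  # rate 5 when a wrong decision is cheap
    "human_bottleneck": "Medium",
}


def score_use_case(ratings, critical_floor=3):
    """Return a 0-1 weighted score, or 0.0 if any Critical criterion is weak.

    `ratings` maps each criterion name to a 1-5 favorability rating.
    """
    for name, level in CRITERIA.items():
        if level == "Critical" and ratings[name] < critical_floor:
            return 0.0  # a weak Critical criterion disqualifies the use case
    total = sum(WEIGHTS[level] * ratings[name] for name, level in CRITERIA.items())
    max_total = sum(WEIGHTS[level] * 5 for level in CRITERIA.values())
    return total / max_total


# Illustrative ratings only.
ticket_triage = score_use_case({
    "volume": 5, "confidence_distribution": 4, "data_availability": 4,
    "error_cost": 5, "human_bottleneck": 3,
})
contract_negotiation = score_use_case({
    "volume": 2, "confidence_distribution": 2, "data_availability": 3,
    "error_cost": 1, "human_bottleneck": 4,
})
```

The gate reflects the table's intent: a high-volume process with poor data or mostly low-confidence decisions should not win on volume alone.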

Phase 2: Foundation Building (Weeks 4-12)

Before building agents, build the knowledge infrastructure:

- Data cleaning and normalization - agents are only as good as their training data

- Knowledge graph construction - the semantic backbone for reasoning

- API inventory and integration planning - agents must connect to existing systems

- Governance framework design - who approves what, when, and why

Phase 3: Supervised Deployment (Weeks 12-20)

Initial deployment is fully supervised:

- Agent makes recommendations

- Human reviews and approves each action

- Disagreements logged and analyzed

- Behavior refined based on feedback
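
The review loop above produces the data that later justifies more autonomy. As a rough sketch, assuming each review is recorded as a hypothetical `(agent_recommendation, human_decision, category)` tuple, the agreement rate and the categories where the agent and reviewers diverge can be summarized like this:

```python
from collections import Counter


def review_log_summary(reviews):
    """Summarize supervised reviews.

    `reviews` is a list of (agent_recommendation, human_decision, category)
    tuples; this record shape is an assumption for illustration.
    Returns (agreement_rate, disagreements_by_category).
    """
    total = len(reviews)
    disagreements = [r for r in reviews if r[0] != r[1]]
    by_category = Counter(category for _, _, category in disagreements)
    agreement_rate = (total - len(disagreements)) / total if total else 0.0
    return agreement_rate, by_category


# Illustrative log entries only.
reviews = [
    ("approve", "approve", "invoice"),
    ("approve", "reject", "contract"),
    ("reject", "reject", "invoice"),
    ("approve", "reject", "contract"),
]
rate, by_category = review_log_summary(reviews)
```

Breaking disagreements down by category shows where behavior needs refinement and which decision types are candidates for early autonomy.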

The Supervision Period

Plan for 8-12 weeks minimum. Rushing to autonomy creates risk. This period builds trust, refines behavior, and identifies edge cases that didn't appear in training data.

Phase 4: Graduated Autonomy (Weeks 20+)

As reliability is proven, autonomy expands:

Level 1: Agent acts autonomously on high-confidence decisions (typically 40-60% of volume)

Level 2: Agent acts on most decisions; humans review samples and exceptions (target for most enterprises)

Level 3: Full autonomy with exception-only human review (requires exceptional accuracy and low error costs)
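
The three levels can be read as a routing rule on model confidence. The sketch below is one possible interpretation, not a prescribed policy: the thresholds, the audit sample rate, and the definition of an "exception" as a low-confidence decision are all hypothetical assumptions to be tuned per use case.

```python
import random

HIGH_CONFIDENCE = 0.95  # hypothetical cutoff for Level 1 autonomy
EXCEPTION_FLOOR = 0.60  # hypothetical: below this, treat as an exception
SAMPLE_RATE = 0.10      # hypothetical share of Level 2 actions audited


def route_decision(confidence, level, rng=random.random):
    """Return 'auto', 'auto_then_audit', or 'human_review' for one decision."""
    if level == 1:
        # Level 1: act only on high-confidence decisions.
        return "auto" if confidence >= HIGH_CONFIDENCE else "human_review"
    if level in (2, 3):
        if confidence < EXCEPTION_FLOOR:
            return "human_review"  # exceptions always go to a person
        if level == 2 and rng() < SAMPLE_RATE:
            return "auto_then_audit"  # Level 2: humans review a sample
        return "auto"
    # Supervised phase (or unknown level): a human approves every action.
    return "human_review"
```

For example, `route_decision(0.80, 1)` goes to human review, while the same decision at Level 2 is executed automatically and occasionally sampled for audit. Injecting `rng` keeps the sampling deterministic in tests.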

The ROI Reality Check

The economics are compelling - when done right:

Quantifiable Benefits

- Labor capacity freed: Not headcount reduction, but reallocation to higher-value work

- Throughput increase: Volume handled per unit time

- Quality improvement: Consistency that humans can't maintain at scale

- Speed improvement: Minutes instead of days for routine decisions

The Hidden Costs

Most business cases underestimate:

- Model maintenance: Continuous retraining as patterns shift

- Exception handling: Human review of escalations doesn't disappear

- Integration complexity: APIs often take longer than agent development

- Change management: People need to trust and work alongside agents

The Payback Test

Target 18-month payback on agent investments. Longer timelines introduce too much uncertainty around technology evolution and organizational change.
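
The payback test is simple arithmetic, but it only holds if the hidden costs are in the denominator. A minimal sketch, with all dollar figures invented purely for illustration:

```python
def payback_months(upfront_cost, monthly_benefit, monthly_run_cost):
    """Months to recover the upfront investment from net monthly benefit.

    `monthly_run_cost` should include the hidden costs: model maintenance,
    exception handling, integration upkeep, and change management.
    Returns None when the net benefit is zero or negative (never pays back).
    """
    net = monthly_benefit - monthly_run_cost
    if net <= 0:
        return None
    return upfront_cost / net


def meets_payback_target(months, target_months=18):
    """Apply the 18-month payback target from the text."""
    return months is not None and months <= target_months


# Hypothetical figures: $450k build, $40k/month benefit, $10k/month to run.
months = payback_months(450_000, 40_000, 10_000)
```

With these assumed figures the net benefit is $30k per month, so the investment pays back in 15 months and passes the test. Raise the run cost to $20k per month and payback stretches to 22.5 months, which fails it.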

Case Study: Logistics Exception Handling

A $600M logistics company wanted to automate shipment exception handling. The manual process relied on 12 FTEs reviewing exceptions, determining resolutions, and communicating with customers.

Phase 1 (Weeks 1-12):

- Focused on high-volume, low-complexity exceptions

- Built knowledge graph connecting customer preferences, carrier capabilities, and resolution patterns

- 40% of exceptions handled autonomously

- 94% customer satisfaction (vs 91% human baseline)

Phase 2 (Weeks 12-24):

- Extended to medium-complexity exceptions

- Added proactive customer notification

- Integrated with carrier systems for real-time updates

Current State:

The remaining 22% stay human-handled. By design.

Looking Forward: What's Coming in 2026

Based on current trajectories:

Multi-agent orchestration becomes standard. Single agents give way to coordinated teams with specialized roles and governance frameworks.

Agentic commerce emerges. Visa declared 2025 "the final year consumers shop and checkout alone." Mastercard, PayPal, and Google have launched protocols for AI agents to make purchases. Brands will need to be legible to agents, not just humans.

The talent crunch intensifies. Job postings for "AI agent" developers rose nearly 1,000% between 2023 and 2024. Building internal capability - not just buying solutions - becomes a competitive advantage.

2026 is when enterprises move from pilots to production. The infrastructure is in place. The protocols exist. The question is whether your organization has the operational discipline to execute.

Exploring AI agents for your organization? Let's discuss where agents could drive measurable value in your operations.

25+ years of experience in web development and technology leadership. AWS-certified professional who has led major digital projects for brands like A2 Milk, Toll, and Uniting. Advocates a pragmatic, milestone-driven approach to technology.
