The Real Cost of AI at Scale

Token costs dropped 280-fold in two years - yet enterprise AI bills are hitting tens of millions. Here's why the economics of AI break at production scale, and how to fix them.

The Paradox Nobody Talks About

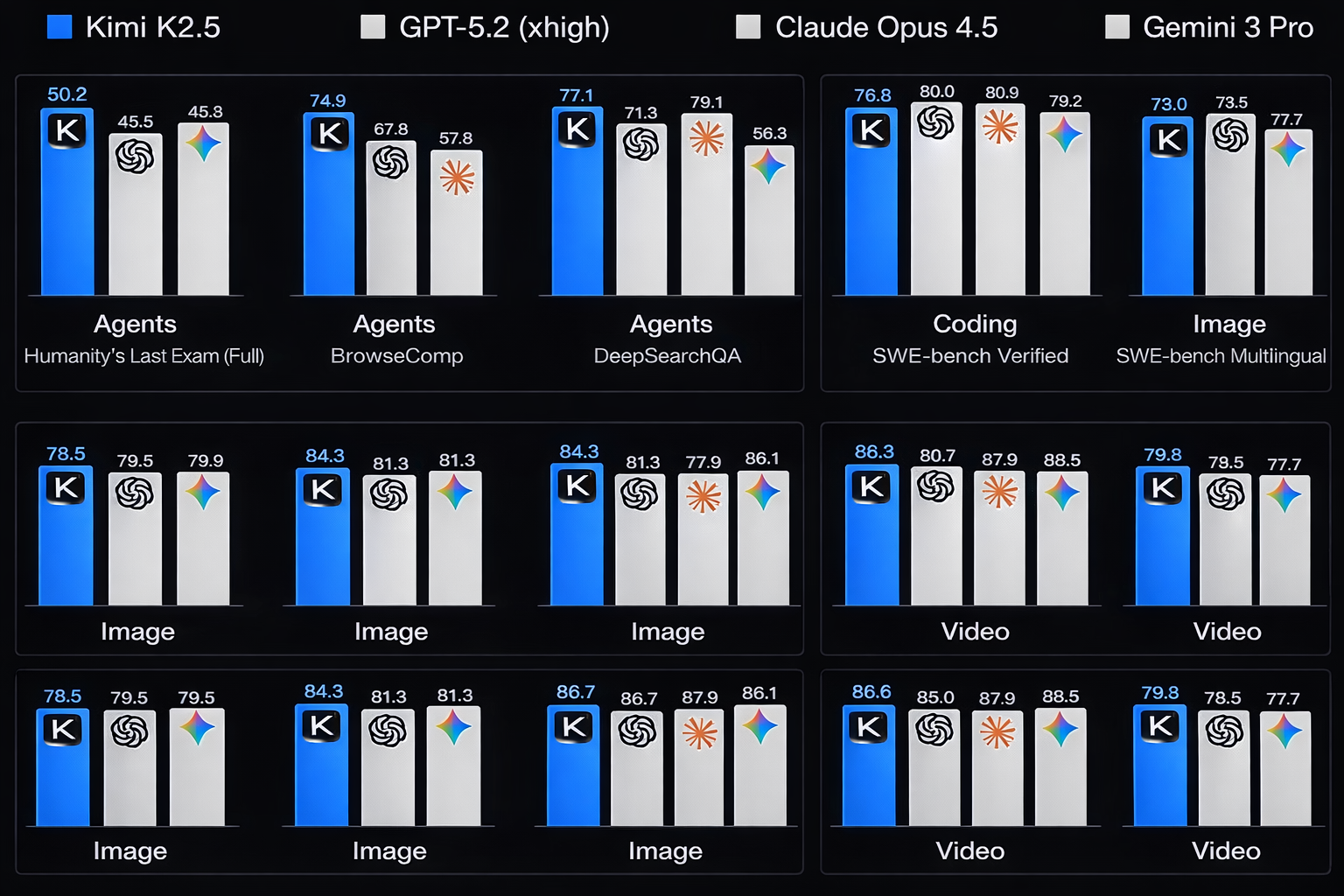

Here's a number that should make every CTO pause: token costs have fallen roughly 280-fold in two years. And yet, some enterprises are seeing monthly AI bills in the tens of millions.

How is that possible? Because usage exploded faster than costs declined. The pilot that cost $500 a month now runs across 40 departments, processes 10x the volume, and hits APIs that nobody budgeted for.
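To make that arithmetic concrete, here is a rough sketch. Only the $500 pilot, the 40 departments, and the 10x volume come from the paragraph above. Treating every department as running at pilot scale, applying the 10x to each of them, and the halved unit price are all illustrative assumptions.

```python
def monthly_bill(pilot_cost: float, departments: int,
                 volume_multiplier: float, price_multiplier: float = 1.0) -> float:
    """Scale a pilot's monthly cost by rollout breadth, volume, and unit-price change."""
    return pilot_cost * departments * volume_multiplier * price_multiplier


# Figures from the text: a $500/month pilot, 40 departments, 10x the volume.
at_pilot_prices = monthly_bill(500, 40, 10)

# Hypothetical: unit prices halve while the rollout happens.
at_half_prices = monthly_bill(500, 40, 10, price_multiplier=0.5)

print(f"${at_pilot_prices:,.0f} vs ${at_half_prices:,.0f} per month")
```

Under these assumptions the bill grows 400-fold to $200,000 a month, and halving unit prices still leaves it at $100,000. Prices have to fall as fast as usage grows just to hold the bill flat.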

This is the dirty secret of enterprise AI in 2026. The technology works. The economics don't - unless you design for them.

Where the Money Actually Goes

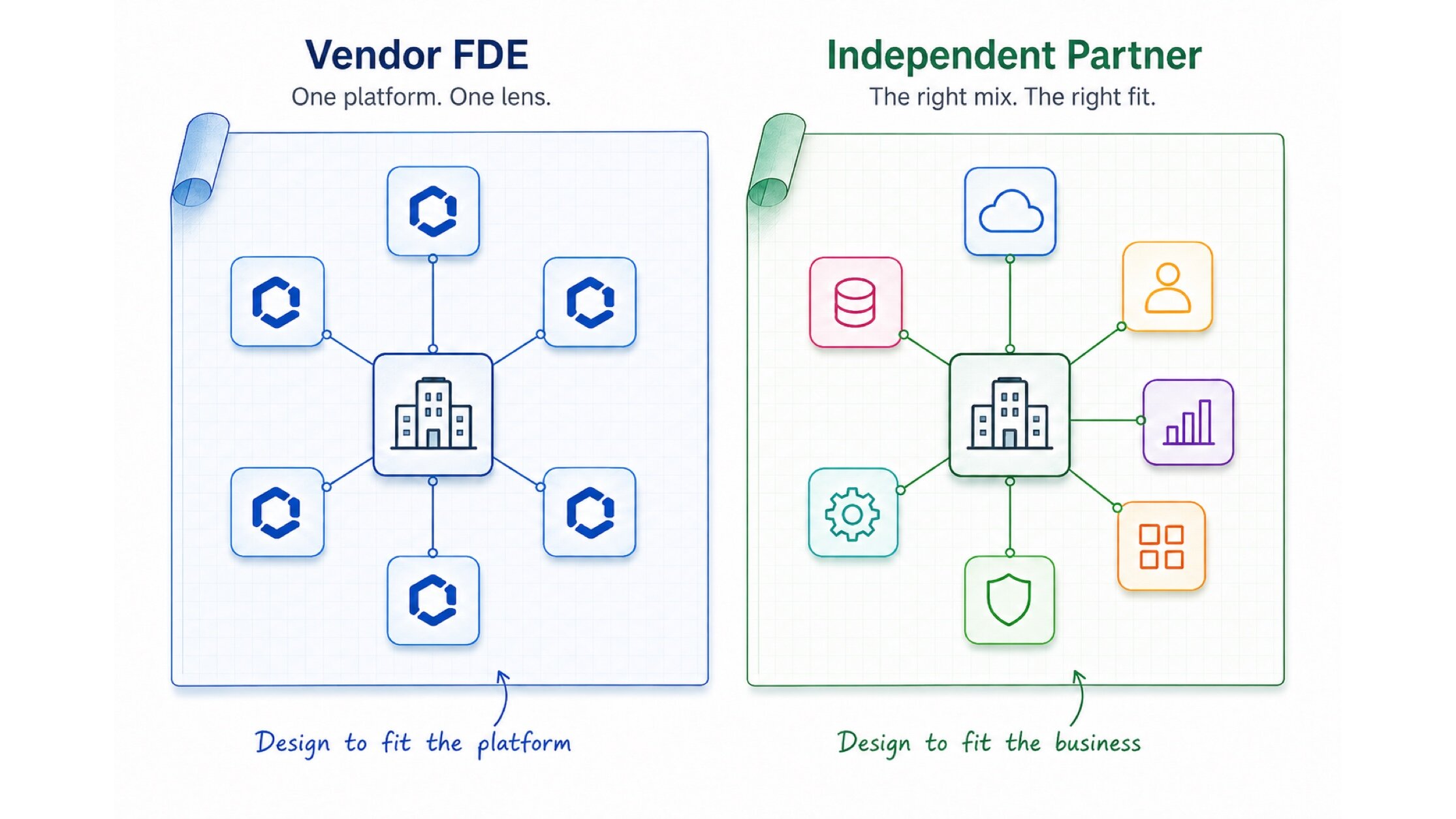

Most enterprises track the wrong costs. They obsess over model licensing and ignore the infrastructure iceberg underneath.

The real cost drivers aren't the models themselves. They're the data pipelines feeding them, the orchestration layers connecting them, the monitoring systems watching them, and the humans maintaining all of it.
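A simple cost breakdown makes the iceberg visible. The sketch below is one possible shape for it, not a standard model: the category names mirror the cost drivers listed above, and every dollar figure is a placeholder rather than real data.

```python
from dataclasses import dataclass, fields


@dataclass
class MonthlyCost:
    """Monthly cost of one AI initiative in dollars. All values are placeholders."""
    model_api: float
    data_pipelines: float
    orchestration: float
    monitoring: float
    staff: float

    def total(self) -> float:
        # Sum every cost category declared on the dataclass.
        return sum(getattr(self, f.name) for f in fields(self))

    def share(self, category: str) -> float:
        """Fraction of the total spent on one category."""
        return getattr(self, category) / self.total()


# Hypothetical initiative: model spend is visible, the rest is the iceberg.
example = MonthlyCost(model_api=20_000, data_pipelines=30_000,
                      orchestration=15_000, monitoring=10_000, staff=25_000)

print(f"Model/API share of total: {example.share('model_api'):.0%}")
```

With these made-up numbers the model bill is only a fifth of the total, which is the pattern this section describes: tracking API spend alone would miss most of the cost.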

Three Patterns That Break the Budget

1. The "Peanut Butter" Problem

Spreading AI thinly across dozens of use cases instead of concentrating on the three that actually move the needle. Every use case carries fixed costs - data prep, testing, monitoring, compliance review. Multiply that by 40 low-value experiments and you've built an expensive science project.

The fix: Ruthlessly prioritise. Pick the use cases where AI creates measurable business value - cost reduction, revenue generation, or risk mitigation - and go deep on those.
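One way to make that prioritisation less subjective is to rank candidates by estimated net value and drop anything that doesn't cover its own fixed costs. The sketch below assumes you can put rough annual figures on each use case. The use case names, the numbers, and the cut-off of three are all hypothetical.

```python
from typing import NamedTuple


class UseCase(NamedTuple):
    name: str
    annual_value: float   # estimated business value
    fixed_cost: float     # data prep, testing, monitoring, compliance review
    variable_cost: float  # inference and other usage-driven spend

    @property
    def net_value(self) -> float:
        return self.annual_value - self.fixed_cost - self.variable_cost


def prioritise(use_cases: list[UseCase], keep: int = 3) -> list[UseCase]:
    """Return the `keep` use cases with the highest positive net value."""
    viable = [u for u in use_cases if u.net_value > 0]
    return sorted(viable, key=lambda u: u.net_value, reverse=True)[:keep]


# Hypothetical candidates with made-up figures.
candidates = [
    UseCase("invoice triage", 900_000, 150_000, 100_000),
    UseCase("support deflection", 1_200_000, 200_000, 300_000),
    UseCase("meeting summaries", 120_000, 150_000, 20_000),
    UseCase("contract review", 600_000, 180_000, 60_000),
    UseCase("internal chatbot", 200_000, 150_000, 40_000),
]

shortlist = prioritise(candidates)
for u in shortlist:
    print(f"{u.name}: net {u.net_value:,.0f}")
```

The ranking is only as good as the value estimates behind it, but even rough numbers tend to expose the experiments whose fixed costs exceed anything they could return.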

2. The Infrastructure Gap

Most enterprises built their infrastructure for cloud-first workloads. AI workloads are fundamentally different. They need GPU compute, vector databases, real-time inference pipelines, and caching layers that didn't exist in your 2023 architecture.

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027. In most cases the problem isn't that the technology doesn't work. It's that organizations are automating broken processes on inadequate infrastructure.

The fix: Treat AI infrastructure as a platform investment, not a project expense. Build shared foundations - embedding stores, orchestration layers, evaluation frameworks - that every team can leverage.
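The platform argument is easy to test with back-of-the-envelope numbers. The sketch below compares building each use case standalone against paying once for a shared platform plus a smaller marginal cost per use case. All three cost figures are hypothetical, and the model ignores ongoing platform maintenance.

```python
import math


def project_total(n: int, standalone_cost: float) -> float:
    """Total cost when each of n use cases builds its own infrastructure."""
    return n * standalone_cost


def platform_total(n: int, platform_cost: float, marginal_cost: float) -> float:
    """Total cost when n use cases share one platform investment."""
    return platform_cost + n * marginal_cost


def break_even(standalone_cost: float, platform_cost: float, marginal_cost: float) -> int:
    """Smallest number of use cases at which the platform costs no more than standalone builds."""
    saving = standalone_cost - marginal_cost
    if saving <= 0:
        raise ValueError("platform never pays off: marginal cost is not below standalone cost")
    return math.ceil(platform_cost / saving)


# Hypothetical figures, in dollars.
STANDALONE, PLATFORM, MARGINAL = 300_000, 1_000_000, 80_000

n = break_even(STANDALONE, PLATFORM, MARGINAL)
print(f"Platform pays off from use case {n}")
print(f"10 use cases: {project_total(10, STANDALONE):,.0f} standalone "
      f"vs {platform_total(10, PLATFORM, MARGINAL):,.0f} on a platform")
```

With these placeholder numbers the platform is more expensive for the first four use cases and cheaper from the fifth onward, which is why it only makes sense as an investment decision rather than a per-project line item.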

3. The Integration Tax

The average enterprise AI deployment touches 7+ systems. Each integration is a maintenance liability, a security surface, and a point of failure. When your AI agent needs data from Salesforce, SAP, and three internal APIs to answer one question, the cost isn't the token - it's the plumbing.

The fix: Invest in a robust integration layer before scaling AI. API gateways, event-driven architectures, and standardized data contracts pay for themselves within months.
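As a small illustration of what a standardized data contract can look like in code, the sketch below validates incoming payloads against one agreed shape before anything reaches the AI layer. The `CustomerRecord` type and its fields are hypothetical, chosen only for the example. A real contract would normally live in a shared schema rather than in application code.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class CustomerRecord:
    """Hypothetical contract that every upstream system must satisfy."""
    customer_id: str
    source_system: str
    updated_at: datetime


REQUIRED_FIELDS = {"customer_id", "source_system", "updated_at"}


def parse_record(raw: dict) -> CustomerRecord:
    """Validate one raw payload against the contract; raise ValueError on violation."""
    missing = REQUIRED_FIELDS - raw.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return CustomerRecord(
        customer_id=str(raw["customer_id"]),
        source_system=str(raw["source_system"]),
        # Timestamps are expected in ISO 8601 form; anything else raises ValueError.
        updated_at=datetime.fromisoformat(raw["updated_at"]),
    )


record = parse_record({
    "customer_id": 1042,
    "source_system": "crm",
    "updated_at": "2026-01-15T09:30:00",
})
print(record)
```

Rejecting malformed records at the boundary means each new AI use case integrates against one shape instead of re-learning the quirks of every source system.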

The Playbook for Sustainable AI Economics

The enterprises getting AI economics right share three traits:

They measure total cost of ownership, not just API spend. Every AI initiative gets a full cost model: compute, data, integration, monitoring, compliance, and opportunity cost.

They build platforms, not projects. Shared infrastructure means the 10th AI use case costs a fraction of the first. Reusable components - prompt libraries, evaluation suites, data connectors - compound over time.

They design for cost from day one. Model selection, caching strategies, batching patterns, and fallback logic aren't afterthoughts. They're architecture decisions made before the first line of code.
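To show how those decisions can live in the architecture rather than being bolted on later, here is a minimal sketch of a client that combines a response cache, a crude model-selection rule, and fallback logic. The class, the length-based routing heuristic, and the stub model functions are all assumptions made for illustration. Batching is left out, and real model calls would replace the stubs.

```python
from typing import Callable

ModelFn = Callable[[str], str]


class CostAwareClient:
    """Route prompts to a cheap model first, cache answers, fall back on failure."""

    def __init__(self, cheap: ModelFn, strong: ModelFn, max_cheap_chars: int = 500):
        self.cheap = cheap
        self.strong = strong
        self.max_cheap_chars = max_cheap_chars
        self.cache: dict[str, str] = {}
        self.calls = {"cheap": 0, "strong": 0, "cache": 0}

    def ask(self, prompt: str) -> str:
        # Caching: an identical prompt never pays for a second model call.
        if prompt in self.cache:
            self.calls["cache"] += 1
            return self.cache[prompt]

        if len(prompt) <= self.max_cheap_chars:
            # Model selection: short prompts try the cheaper model first.
            try:
                answer = self.cheap(prompt)
                self.calls["cheap"] += 1
            except Exception:
                # Fallback: escalate to the stronger model if the cheap one fails.
                answer = self.strong(prompt)
                self.calls["strong"] += 1
        else:
            answer = self.strong(prompt)
            self.calls["strong"] += 1

        self.cache[prompt] = answer
        return answer


# Stub models standing in for real API calls.
def cheap_model(prompt: str) -> str:
    if "contract" in prompt:
        raise RuntimeError("cheap model declined")
    return f"cheap:{prompt}"


def strong_model(prompt: str) -> str:
    return f"strong:{prompt}"


client = CostAwareClient(cheap_model, strong_model)
client.ask("summarise this ticket")
client.ask("summarise this ticket")  # served from the cache
client.ask("review this contract")   # cheap model fails, falls back
print(client.calls)
```

Keeping the call counters in the client is a deliberate choice: it gives you a per-route usage breakdown from day one, which is what a cost model needs as input.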

The Bottom Line

AI at scale is an infrastructure challenge disguised as a technology opportunity. The enterprises that win won't be those with the most sophisticated models - they'll be the ones who build the economic foundations to deploy AI sustainably across the organisation.

The question isn't whether you can afford AI. It's whether you can afford to scale it without a plan.

Kipanga helps enterprises build AI infrastructure that scales sustainably. From cost modeling and architecture design to platform engineering and integration strategy - we turn AI experiments into production-grade systems with predictable economics.

25+ years in technology innovation. Founder and former CTO of MyFleet, a leading telematics platform. Technical Lead for GenAI at University of Newcastle. Expert in AI, Machine Learning, IoT, and Agile development.
