Why Your AI Strategy Should Include Open-Source Models

Open-source LLMs are closing the gap with proprietary frontier models fast - with 86% lower costs and performance parity projected by mid-2026. Enterprises that ignore them are leaving money, flexibility, and control on the table.

Most enterprises default to proprietary AI models. OpenAI, Anthropic, Google - the names are familiar, the APIs are easy, and the demos are impressive. For many use cases, that's the right call.

But if your AI strategy only considers proprietary models, you're missing half the picture. Open-source LLMs have crossed a critical threshold. They're no longer the budget alternative you settle for when you can't afford the real thing. In a growing number of scenarios, they are the smarter strategic choice.

Gartner forecasts that over 60% of businesses will adopt open-source LLMs for at least one AI application by 2026. Deloitte reports that companies using open-source models achieve 40% cost savings while maintaining comparable performance. This isn't an experiment anymore - it's a maturing enterprise strategy.

The Performance Gap Is Closing Fast

Two years ago, the gap between open-source and proprietary models was a chasm. Today, it's a crack - and it's narrowing every quarter.

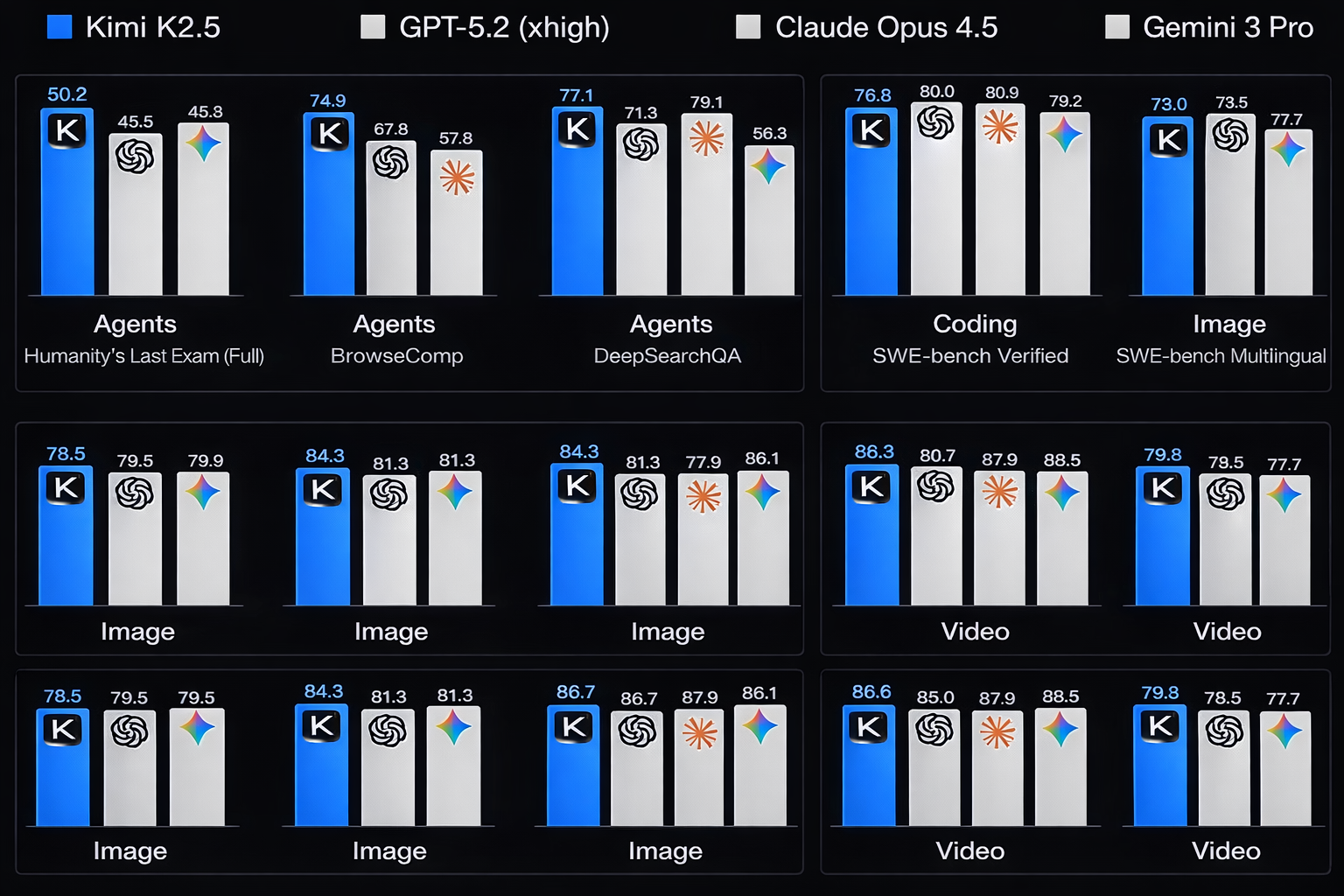

A comprehensive benchmark analysis of 94 leading LLMs found that the quality gap between the best open-source model and the top proprietary model has shrunk from 15-20 points in late 2024 to approximately 9 points today. At the current rate of improvement, analysts project performance parity by mid-2026.

The recent arrival of models like Moonshot AI's Kimi K2.5 - a trillion-parameter Mixture-of-Experts model with native multimodal support and a 256,000-token context window - illustrates the pace of change. On the Humanity's Last Exam benchmark, K2.5 scored 50.2%, edging out several proprietary peers. On SWE-Bench Verified, it hit 76.8%, within striking distance of the 80% achieved by top closed models.

These aren't cherry-picked results on niche benchmarks. Across coding, reasoning, image understanding, and agentic tasks, open-source models are competing head-to-head with the biggest names in AI.

The Benchmark Reality

Closed frontier models still lead in some areas - particularly nuanced reasoning and deep ecosystem integration. But for roughly 80% of real-world enterprise use cases, open-source models deliver comparable results at a fraction of the cost.

The Strategic Case for Open-Source

The performance story is compelling, but it's not the only reason enterprises should pay attention. The strategic advantages run deeper.

Cost Predictability at Scale

Proprietary APIs charge per token. When your AI usage is low, the bill is manageable. When it scales - and the whole point of enterprise AI is to scale - those per-token costs become significant and unpredictable.

Open-source models on self-hosted or dedicated infrastructure offer flat-cost scaling. You're paying for compute, not for tokens. For high-volume applications like customer service automation, document processing, or internal knowledge systems, the economics are dramatically different.

| Model Approach | Cost Model | At Scale |
|---|---|---|
| Proprietary API | Per-token pricing | Costs grow linearly with usage |
| Open-source (self-hosted) | Infrastructure cost | Costs plateau as utilization increases |
| Open-source (managed) | Fixed or tiered pricing | Predictable monthly spend |
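To make the comparison in the table concrete, here is a minimal break-even sketch. The price per million tokens and the monthly infrastructure cost are hypothetical placeholders, not real vendor figures, and the flat-cost line assumes your hardware can actually serve the volume in question.

```python
def monthly_api_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Per-token pricing: cost grows linearly with usage."""
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(infra_cost_per_month: float, price_per_million: float) -> float:
    """Monthly token volume at which a flat infrastructure bill equals the API bill."""
    return infra_cost_per_month / price_per_million * 1_000_000


# Hypothetical figures for illustration only, not real vendor prices.
API_PRICE_PER_MILLION = 10.0     # USD per million tokens (assumed)
INFRA_COST_PER_MONTH = 20_000.0  # USD per month for dedicated compute (assumed)

threshold = break_even_tokens(INFRA_COST_PER_MONTH, API_PRICE_PER_MILLION)
print(f"Break-even volume: {threshold:,.0f} tokens/month")

for volume in (500e6, 5e9, 10e9):
    api = monthly_api_cost(volume, API_PRICE_PER_MILLION)
    cheaper = "self-hosted" if volume > threshold else "API"
    print(f"{volume:>14,.0f} tokens/month: API ${api:,.0f} vs flat ${INFRA_COST_PER_MONTH:,.0f} -> {cheaper}")
```

Plug in your own numbers. Below the break-even volume the API is cheaper; above it, the flat bill wins for as long as capacity holds.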

Data Sovereignty and Privacy

When you use a proprietary API, your data travels through someone else's infrastructure. For many use cases, that's acceptable. For regulated industries - healthcare, finance, legal, government - it's often not.

Open-source models can be deployed entirely within your own infrastructure. Your data never leaves your environment. This isn't just a compliance checkbox - it's a fundamental requirement for organizations handling sensitive information.

The Data Control Question

Only 40% of companies provide official LLM subscriptions, yet workers from over 90% of organizations report using personal AI tools for work. If your enterprise doesn't offer a privacy-compliant AI option, your employees are likely sending company data through consumer AI services. Self-hosted open-source models solve this problem.

No Vendor Lock-In

The AI landscape shifts fast. Today's leading model might be tomorrow's commodity. When your entire AI stack depends on a single proprietary vendor, you're exposed to their pricing changes, rate limits, deprecation decisions, and strategic pivots.

Open-source models are interchangeable. If a better model launches next month - and in this market, it probably will - you can adopt it without rearchitecting your entire system. Your integration layer stays the same. Your data pipelines stay the same. Only the model changes.

Customization and Fine-Tuning

Proprietary models are general-purpose by design. They're optimized for the broadest possible range of tasks. That's powerful, but it means they're rarely the best option for your specific domain.

Open-source models can be fine-tuned on your proprietary data to become specialists in your industry, your processes, and your language. A fine-tuned smaller open-source model often outperforms a general-purpose proprietary model on domain-specific tasks - at a fraction of the inference cost.

Open-source doesn't mean "cheap alternative." It means full control over customization, deployment, data privacy, and cost structure. For enterprises with the technical capability to leverage it, that control is a competitive advantage.

When Proprietary Still Wins

This isn't a case for abandoning proprietary models. It's a case for having both in your toolkit. Proprietary models maintain clear advantages in specific scenarios:

Rapid prototyping. When you need to test an idea in hours, not days, a single API call to a frontier model is unbeatable. The ease of getting started is genuinely valuable.

Ultra-complex reasoning. For the most demanding reasoning and analysis tasks - the kind that push the boundaries of what AI can do - proprietary frontier models still have an edge. That edge is shrinking, but it exists today.

Ecosystem integration. Proprietary platforms like Salesforce's Agentforce or Microsoft's Copilot offer deep integration with their respective ecosystems. If your business already runs on these platforms, the built-in AI capabilities can be more practical than self-hosted alternatives.

Limited technical capacity. Self-hosting and fine-tuning open-source models require ML engineering expertise. If your organization doesn't have that capability - and doesn't want to build or partner for it - proprietary APIs are the pragmatic choice.

The Hybrid Approach

The most sophisticated enterprises aren't choosing one or the other. They're running both - selecting the right model for each use case based on the actual requirements, not brand loyalty.

A practical hybrid strategy might look like this:

Proprietary for exploration and edge cases. Use frontier proprietary models for rapid prototyping, complex one-off analysis, and tasks where absolute peak performance justifies the per-token cost.

Open-source for production workloads. Deploy self-hosted open-source models for high-volume, steady-state operations where cost predictability, data privacy, and customization matter more than marginal quality differences.

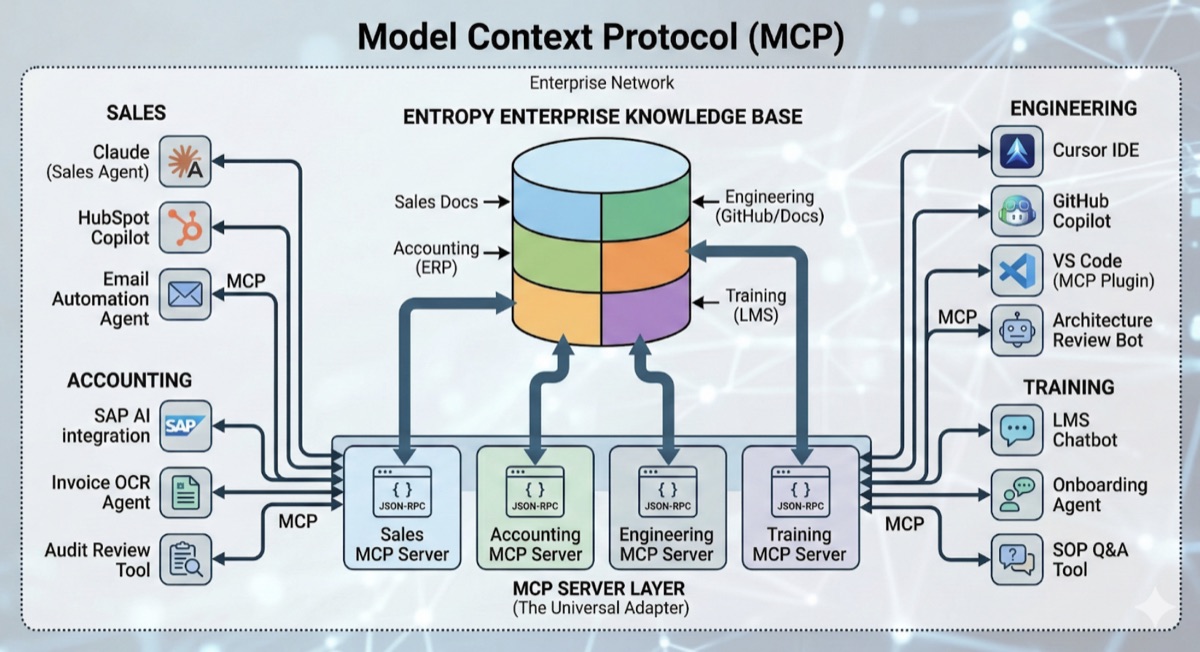

MCP for the glue. Use Model Context Protocol to decouple your knowledge base from your model layer. When your corporate data is accessible via MCP, switching between proprietary and open-source models becomes trivial. Marketing can use the latest Gemini with search capabilities, sales can use Claude for outreach, and engineering can use Cursor or Claude Code - all with secure connections to company information.

Performance drives decisions. Builders consistently choose frontier models over cheaper, faster alternatives - but 66% simply upgrade within their existing provider rather than evaluating the full landscape.

That last statistic reveals the real problem. Most enterprises aren't making active model decisions. They're defaulting to whatever they started with. A deliberate strategy that evaluates open-source options for each use case can capture significant savings without sacrificing capability.
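One way to turn the hybrid split above into an active decision is a small routing rule applied per workload. This is a sketch under stated assumptions: the `Workload` fields, the volume cutoff, and the order of the checks are all hypothetical and should follow your own requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    monthly_tokens: int
    sensitive_data: bool
    needs_peak_reasoning: bool


# Assumed cutoff for "high volume"; derive the real one from your cost analysis.
HIGH_VOLUME_TOKENS = 1_000_000_000


def choose_model_tier(workload: Workload) -> str:
    """Return 'open-source' or 'proprietary' for a workload."""
    if workload.sensitive_data:
        return "open-source"   # keep regulated data inside your environment
    if workload.needs_peak_reasoning:
        return "proprietary"   # peak quality justifies per-token cost
    if workload.monthly_tokens >= HIGH_VOLUME_TOKENS:
        return "open-source"   # steady-state volume favors flat cost
    return "proprietary"       # low-volume exploration and prototyping


for w in (
    Workload("support-bot", 4_000_000_000, sensitive_data=False, needs_peak_reasoning=False),
    Workload("contract-review", 50_000_000, sensitive_data=True, needs_peak_reasoning=False),
    Workload("strategy-analysis", 5_000_000, sensitive_data=False, needs_peak_reasoning=True),
):
    print(w.name, "->", choose_model_tier(w))
```

The point is less the specific rules than that they are written down and reviewed, instead of defaulting to whichever provider you started with.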

Getting Started

If your organization hasn't evaluated open-source models yet, here's a practical path:

Identify Your High-Volume Use Cases

Which AI workloads process the most tokens? Customer service automation, document processing, internal search, content generation - these high-volume applications are where open-source delivers the biggest cost advantage.
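If you already log token counts per request, ranking workloads by volume takes a few lines. The record fields used here (`use_case`, `prompt_tokens`, `completion_tokens`) are assumed names for illustration; map them to whatever your gateway or provider logs actually contain.

```python
from collections import Counter


def tokens_by_use_case(records):
    """Sum prompt and completion tokens per use case, largest first."""
    totals = Counter()
    for record in records:
        totals[record["use_case"]] += record["prompt_tokens"] + record["completion_tokens"]
    return totals.most_common()


# Made-up sample records, standing in for a real usage log export.
sample_log = [
    {"use_case": "support", "prompt_tokens": 800, "completion_tokens": 200},
    {"use_case": "search", "prompt_tokens": 100, "completion_tokens": 50},
    {"use_case": "support", "prompt_tokens": 400, "completion_tokens": 100},
]

for use_case, tokens in tokens_by_use_case(sample_log):
    print(f"{use_case}: {tokens} tokens")
```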

Run a Side-by-Side Evaluation

Take your actual production prompts and run them through both proprietary and open-source models. Measure output quality against your specific success criteria - not abstract benchmarks. You may be surprised how close the results are.
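A side-by-side evaluation can start as a very small harness. In the sketch below, each model is just a function from prompt to output, and the scorer is whatever encodes your success criteria. The two stub models and the `mentions_refund` scorer are placeholders so the example runs without API keys; in practice you would wrap real model calls and a real rubric.

```python
from typing import Callable, Dict, List

Model = Callable[[str], str]          # prompt -> model output
Scorer = Callable[[str, str], float]  # (prompt, output) -> score


def compare_models(prompts: List[str], models: Dict[str, Model], score: Scorer) -> Dict[str, float]:
    """Run every prompt through every model and return each model's mean score."""
    if not prompts:
        raise ValueError("need at least one prompt")
    results: Dict[str, float] = {}
    for name, generate in models.items():
        scores = [score(prompt, generate(prompt)) for prompt in prompts]
        results[name] = sum(scores) / len(scores)
    return results


# Stand-in models and scorer (hypothetical, for illustration only).
def proprietary_stub(prompt: str) -> str:
    return f"Refund policy: {prompt}"


def open_source_stub(prompt: str) -> str:
    return f"Policy: {prompt}"


def mentions_refund(prompt: str, output: str) -> float:
    return 1.0 if "refund" in output.lower() else 0.0


prompts = ["How do I get a refund?", "What are your opening hours?"]
models = {"proprietary": proprietary_stub, "open-source": open_source_stub}
print(compare_models(prompts, models, mentions_refund))
```

Because the harness only depends on the `Model` signature, adding a third or fourth candidate is a one-line change to the `models` dictionary.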

Start with Managed Hosting

You don't need to build GPU infrastructure on day one. Managed open-source hosting platforms let you deploy models with minimal operational overhead while you build internal capability.

The Power of Community

Open-source AI isn't just about the models. It's about the ecosystem - the thousands of developers contributing improvements, fine-tunes, tooling, and deployment optimizations. The pace of innovation in open-source AI is driven by a global community iterating faster than any single company can. That momentum is accelerating, not slowing.

Build the Switching Capability

The most important investment isn't in any specific model - it's in the architecture that lets you switch models easily. Design your AI systems with model-agnostic integration layers. When the next breakthrough open-source model drops (and it will), you want to be able to adopt it in days, not months.
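As a rough illustration of a model-agnostic integration layer, the sketch below has application code depend on a small interface rather than on any vendor SDK. `TextModel`, `EchoModel`, and `Summarizer` are hypothetical names; a real backend would wrap a proprietary API client or a self-hosted inference server behind the same `complete` method.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only thing application code is allowed to know about a model."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real one would call an API or a local server."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


class Summarizer:
    """Application feature written against the interface, not a vendor."""

    def __init__(self, model: TextModel) -> None:
        self.model = model

    def summarize(self, text: str) -> str:
        return self.model.complete(f"Summarize: {text}")


# Swapping models is a change at the construction site only.
print(Summarizer(EchoModel("proprietary")).summarize("Q3 report"))
print(Summarizer(EchoModel("open-source")).summarize("Q3 report"))
```

Prompt formats and output quirks still differ between models, so a swap deserves a re-run of your evaluation, but the integration code itself does not have to change.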

According to Menlo Ventures, open-source models handle 13% of AI workloads, down from 19% six months ago. That number might seem discouraging, but it reflects the convenience advantage of proprietary APIs, not a quality judgment. As enterprises mature in their AI operations and cost pressures intensify, the economic logic of open-source becomes harder to ignore.

The Bottom Line

The AI model landscape is moving faster than any technology market in history. Betting your entire strategy on a single proprietary vendor is a concentration risk. Building the capability to leverage open-source models alongside proprietary ones gives you optionality - to optimize for cost, privacy, performance, and speed as requirements evolve.

Open-source LLMs aren't a compromise. They're a strategic capability. The enterprises that build that capability now will have a meaningful advantage as the market continues to shift.

The question isn't whether open-source models are good enough. For most enterprise use cases, they already are. The question is whether your organization has the architecture and expertise to take advantage of them. Build that capability now, and you'll have options that your competitors don't.

Want to evaluate whether open-source models could reduce costs and increase control across your AI operations? Let's explore the options and design a model strategy that fits your specific requirements.

AWS Certified Cloud Expert and AI & ML Specialist with a background in Data Science from the University of British Columbia. Skilled in building data-driven solutions, business automation, and scalable AI systems.
