What Nvidia's $70 Billion Retreat Tells Us About Where AI Is Heading

Nvidia just slashed its OpenAI commitment from $100B to $30B and signaled its Anthropic investment will be the last. The official reason is IPOs. The real signal is a fundamental reshaping of who controls the AI stack - and what that means for every enterprise building on it.

Nvidia just made a move that deserves more attention than it's getting.

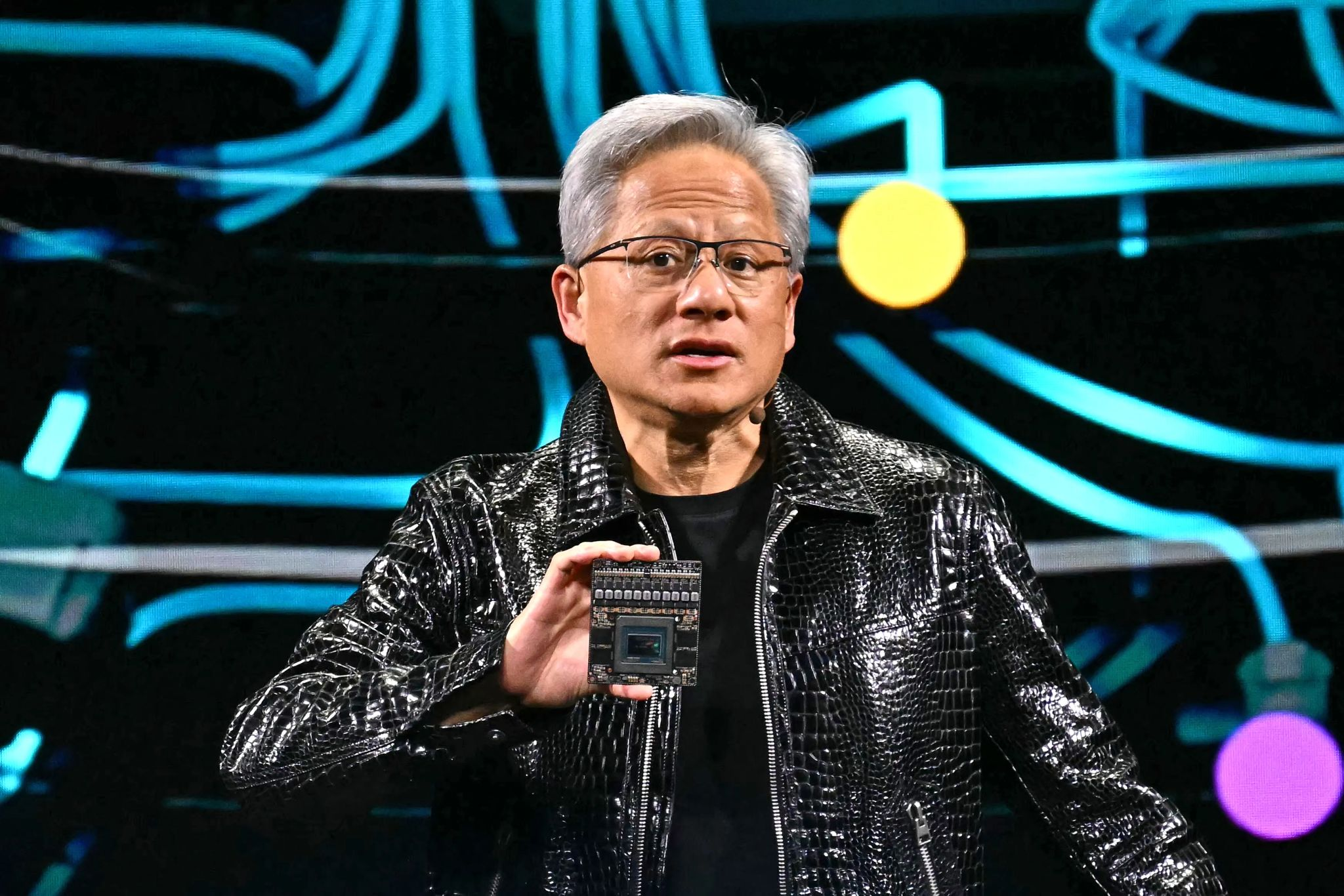

At the Morgan Stanley Tech, Media and Telecom conference on March 4, CEO Jensen Huang signaled that Nvidia's recent investments in OpenAI and Anthropic are likely its last. The company finalized a $30 billion stake in OpenAI's $110 billion round - dramatically less than the $100 billion originally pledged in September - and indicated that its $10 billion Anthropic investment from November will be the final one.

The official explanation is straightforward: both companies are expected to IPO later this year, which closes the private investment window. That's true. It's also not the whole story.

Nvidia isn't pulling back because the opportunity has ended. It's pulling back because the dynamics of the AI ecosystem are shifting - from a world where hardware suppliers and model developers are partners, to one where they're increasingly competitors. Understanding this shift matters for every enterprise building an AI strategy.

What Actually Happened

The timeline tells an interesting story.

In September 2025, Nvidia announced it would invest up to $100 billion in OpenAI as part of a massive infrastructure partnership. By February 2026, that figure had shrunk to $30 billion. Meanwhile, Nvidia invested $10 billion in Anthropic in November 2025 - and is now calling it the last.

That's a $70 billion reduction in the OpenAI commitment alone, in roughly six months. IPO timing doesn't explain that.

Several factors make this more complex than a simple portfolio decision.

First, the circular nature of these deals drew scrutiny. Nvidia invests billions into AI labs. Those labs spend billions on Nvidia chips. MIT Sloan's Michael Cusumano described this arrangement as essentially a wash - raising questions about whether these investments were inflating valuations without creating genuine new value.

Second, Nvidia's relationship with Anthropic became strained. Two months after the $10 billion investment, Anthropic's CEO took the stage at Davos and - without naming Nvidia directly - compared U.S. chip companies selling processors to approved Chinese customers to providing weapons to adversaries. That's not the kind of language that strengthens a partnership.

Third, and most significantly, Nvidia is evolving from a pure hardware supplier into a full-stack AI platform company. Its AI Enterprise software suite, DGX Cloud service, and expanding consulting capabilities increasingly put it in direct competition with the companies it supplies.

The Conflict of Interest

Once OpenAI and Anthropic go public, they'll need to disclose their dependency on Nvidia infrastructure in regulatory filings. Having your primary infrastructure supplier also be an investor and an emerging competitor creates a conflict that public markets won't ignore.

The Infrastructure Layer as Strategic Position

Edward's original observation was sharp: by focusing on providing the infrastructure that powers AI systems rather than aligning deeply with any single model developer, Nvidia remains central to the ecosystem regardless of how the competitive landscape evolves.

This is a deliberate strategic choice - and one with significant implications.

The AI industry is increasingly splitting into layers: infrastructure (chips, compute, data centers), foundation models (the LLMs themselves), and applications (the products and services built on top). The companies that own the infrastructure layer tend to capture durable value regardless of which models or applications win above them.

Think of it like the cloud computing stack. AWS, Azure, and GCP don't need to bet on which SaaS application wins. They profit from all of them. Nvidia is positioning itself similarly - as the infrastructure backbone that every AI company depends on, without betting its future on any single model provider.

The most powerful position in any technology ecosystem is the one that's essential regardless of who wins. Nvidia is deliberately moving into that position for AI - and it's a signal that the model layer is becoming commoditized faster than most people realize.

The Governance Question

The backdrop to Nvidia's pullback includes something more than just business strategy. The AI industry is navigating increasingly complex questions about how advanced systems should be used - particularly in areas related to defense and national security.

Recent events have brought this into sharp focus. The tension between AI developers over government contracts, the debate about what conditions should be attached to military applications of AI, and the regulatory responses to these disagreements all signal that the conversation is expanding well beyond model capabilities.

For enterprises evaluating AI strategy, this matters in practical ways:

Vendor risk is more than financial. The companies providing your AI capabilities are making strategic choices about government relationships, ethical boundaries, and geographic markets that could affect your access to their technology. An AI provider's policy position could impact your operations with little notice.

Regulatory frameworks are still forming. The rules governing AI deployment in sensitive contexts are being written in real time. Enterprises building AI into critical workflows need architectures that can adapt to changing regulatory environments - not systems locked to a single vendor whose regulatory status might shift.

Diversification isn't just about cost. The case for using multiple AI providers and maintaining the ability to switch between them is no longer just about price optimization. It's about resilience in an ecosystem where the relationships between major players are unstable and the regulatory landscape is in flux.
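
To make the resilience argument concrete, here is a minimal sketch of provider failover in Python. Everything in it is an assumption for illustration: the `Provider` wrapper, the `ProviderUnavailable` exception, and the two stub clients are hypothetical stand-ins, not any vendor's real SDK. The point is only that when each provider sits behind the same small interface, losing access to one becomes a fallback path rather than an outage.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Raised by a provider client when it cannot serve a request."""


@dataclass(frozen=True)
class Provider:
    name: str                        # hypothetical label, e.g. "provider_a"
    complete: Callable[[str], str]   # stand-in for a real SDK call


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and move on to the next provider.
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stub clients standing in for real vendor SDKs (assumptions, not real APIs).
def blocked_client(prompt: str) -> str:
    raise ProviderUnavailable("access suspended by policy change")


def healthy_client(prompt: str) -> str:
    return f"summary of: {prompt}"


providers = [
    Provider("provider_a", blocked_client),
    Provider("provider_b", healthy_client),
]
used, answer = complete_with_failover("Q3 vendor contracts", providers)
print(used, "->", answer)
```

In a real deployment the priority order would likely come from configuration, and the failure conditions would include rate limits and timeouts as well as access changes.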

Concentration Risk in AI

If your entire AI stack depends on one model provider, you're exposed not just to their pricing and technology decisions, but to their political and regulatory positioning. The events of the past few months have demonstrated how quickly the landscape can shift.

What This Means for Enterprise AI Strategy

Nvidia's pullback isn't just inside baseball for AI investors. It carries practical implications for any business building on the AI stack.

The Model Layer Is Commoditizing

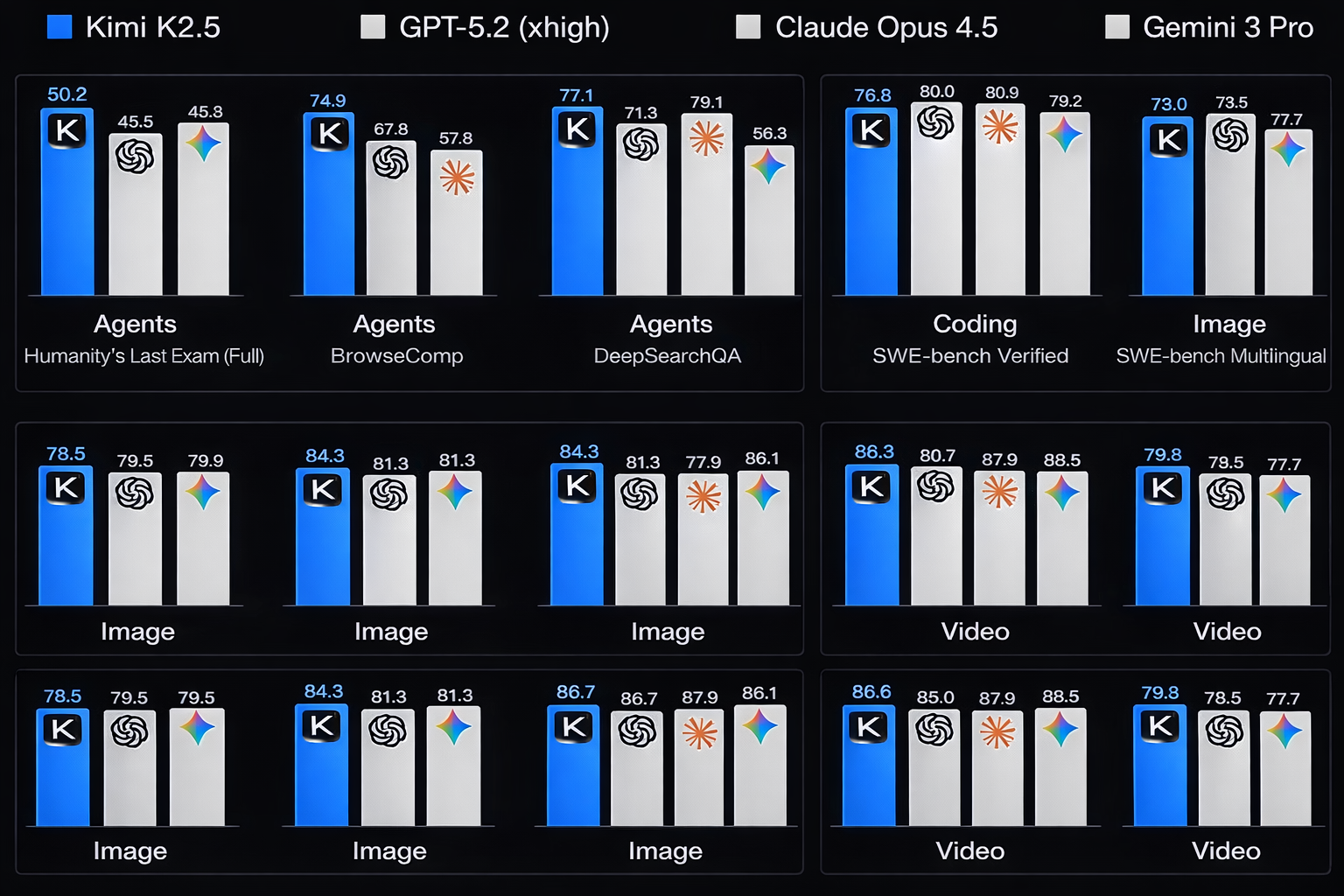

When the company that builds the underlying infrastructure starts distancing itself from model providers, it's a signal about where it sees durable value - and where it doesn't. Nvidia's move suggests that the model layer is becoming more interchangeable than the industry narrative would have you believe.

For enterprises, this reinforces a principle we've been advocating: don't build your AI strategy around a single model. Build it around your data, your processes, and your integration architecture. Models are increasingly swappable. Your business context isn't.

Infrastructure Decisions Matter More Than Model Choices

The companies that will thrive in the next phase of enterprise AI are the ones that invested in the foundational layers - data quality, knowledge architecture, integration infrastructure - rather than optimizing for any particular model's capabilities.

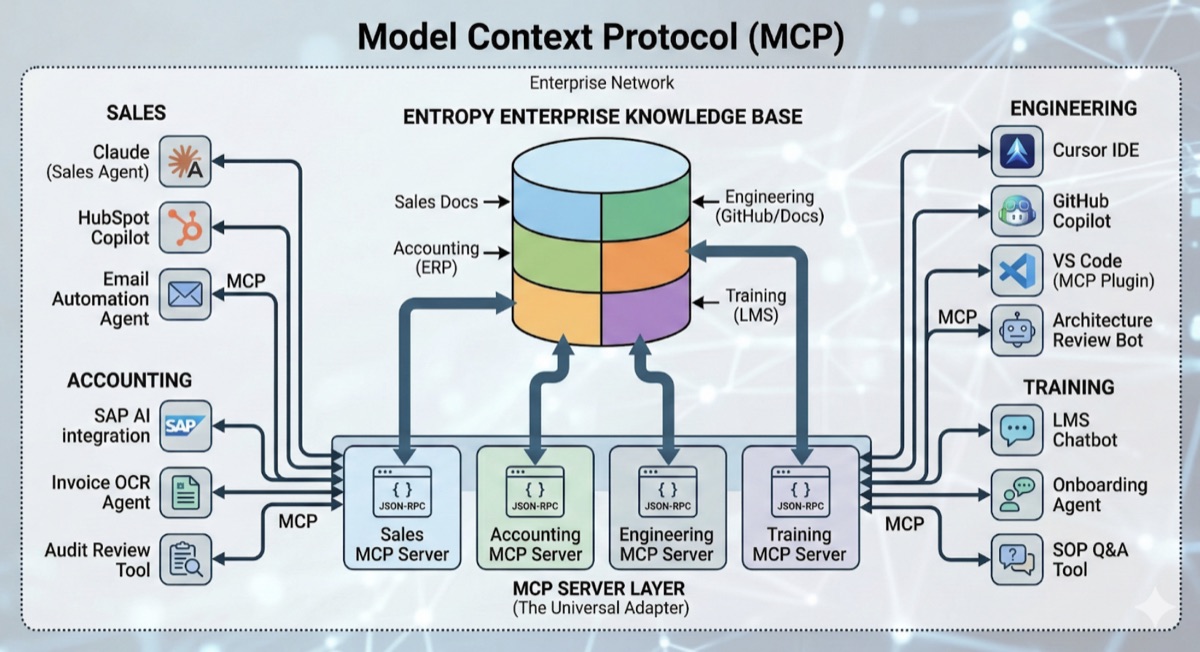

This aligns with the Model Context Protocol (MCP) approach we've implemented across enterprises: decouple your knowledge base from your model layer. When your corporate data is accessible through standardized protocols, switching between models becomes a configuration change rather than an architectural overhaul.
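
As a rough illustration of what "a configuration change rather than an architectural overhaul" can look like, the sketch below keeps the knowledge base behind one interface and the models behind another. It is plain Python and does not use any MCP SDK; the `KnowledgeSource` interface, the `InMemoryKnowledge` class, the `MODEL_REGISTRY`, and the two toy model functions are all hypothetical names invented for this example.

```python
from typing import Callable, Protocol


class KnowledgeSource(Protocol):
    """Anything that can return context passages for a query."""
    def search(self, query: str) -> list[str]: ...


class InMemoryKnowledge:
    """Toy knowledge base; a real one would sit behind a standard protocol."""
    def __init__(self, docs: dict[str, str]) -> None:
        self._docs = docs

    def search(self, query: str) -> list[str]:
        # Naive keyword match, purely for illustration.
        terms = query.lower().split()
        return [text for text in self._docs.values()
                if any(term in text.lower() for term in terms)]


# Hypothetical model backends: each maps a prompt to a reply.
def model_alpha(prompt: str) -> str:
    return f"[alpha] {prompt}"


def model_beta(prompt: str) -> str:
    return f"[beta] {prompt}"


MODEL_REGISTRY: dict[str, Callable[[str], str]] = {
    "alpha": model_alpha,
    "beta": model_beta,
}


def answer(question: str, knowledge: KnowledgeSource, config: dict[str, str]) -> str:
    """Retrieve context, then call whichever model the config names."""
    context = " | ".join(knowledge.search(question))
    model = MODEL_REGISTRY[config["model"]]
    return model(f"context: {context}; question: {question}")


kb = InMemoryKnowledge({
    "policy": "Refunds are issued within 14 days.",
    "hours": "Support is open weekdays.",
})
print(answer("refunds", kb, {"model": "alpha"}))
print(answer("refunds", kb, {"model": "beta"}))  # only the config changed
```

The retrieval code and the knowledge base are identical in both calls; the only difference is the value of `config["model"]`, which is the property the paragraph above is describing.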

Build for Optionality

The AI ecosystem is shifting faster than most planning cycles can accommodate. The enterprises that will navigate this well are the ones with architectures designed for change - not locked into any single vendor's ecosystem.

All of our investments are focused very squarely, strategically on expanding and deepening our ecosystem reach.

When even Nvidia is reducing its commitments to specific AI companies, the message for enterprises is clear: the landscape is too dynamic for concentration bets. Invest in the layers you control - your data, your processes, your integration infrastructure - and maintain the flexibility to adopt whatever model or tool best serves your needs as the market evolves.

The Bigger Picture

Nvidia's retreat from direct AI lab investments isn't an isolated event. It's one signal among many that the AI industry is entering a new phase - one defined less by model capabilities and more by questions of access, governance, infrastructure control, and ecosystem dynamics.

The companies building AI models will continue to innovate. The competition between them will produce better, cheaper, more capable systems. That's good for everyone.

But the real strategic advantage for enterprises won't come from picking the winning model. It will come from building the organizational capability - the data foundations, the integration architecture, the governance frameworks, and the operational expertise - to leverage whichever model best serves your needs at any given moment.

The conversation is gradually expanding beyond model capabilities toward questions of access, responsibility, and oversight. Enterprises that build flexible, model-agnostic AI architectures aren't just making a technical decision - they're positioning themselves to navigate whatever the ecosystem throws at them next.

Concerned about concentration risk in your AI stack? Let's discuss how to build an architecture that gives you optionality - so your AI strategy stays resilient regardless of how the ecosystem evolves.

AWS Certified Cloud Expert and AI & ML Specialist with a background in Data Science from the University of British Columbia. Skilled in building data-driven solutions, business automation, and scalable AI systems.
