Why Digital Transformations Still Fail

Despite global spending projected to reach $3.9 trillion by 2027, the failure rate holds at 70%. After leading dozens of enterprise transformations, we've identified the patterns that predict failure - and the practices that prevent it.

The statistics haven't improved. McKinsey reports 70-90% of digital transformations fail to reach stated goals. BCG's study of 850+ companies found only 35% meet value targets. Some analyses put success as low as 5-30%.

We're now spending $3.4 trillion globally on digital transformation - projected to hit $3.9 trillion by 2027. Much of that investment isn't translating to value.

Transformation fails not because of technology, but because of how organizations define success, manage change, and sequence decisions. The failure rate hasn't budged because the mistakes haven't changed.

After leading transformations across financial services, logistics, healthcare, and manufacturing, we've mapped the failure modes - and the interventions that actually work.

The Brutal Math

Let's ground this in current data:

Failed transformations cost organizations an average of 12% of annual revenue through wasted investment and opportunity costs. For a $500M company, that's $60M in value destruction.

And yet: 70% of organizations have a digital transformation strategy. 61% of C-suite executives call it a top priority. The intention is there. The execution isn't.
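To make the cost figure above concrete, the arithmetic can be sketched in a few lines. This is a minimal illustration that assumes the 12% average applies as a flat rate to annual revenue; the function name and the flat-rate model are our own simplification, not part of any cited study.

```python
def failed_transformation_cost(annual_revenue: float, loss_rate: float = 0.12) -> float:
    """Estimate value lost to a failed transformation.

    loss_rate defaults to the 12%-of-annual-revenue average quoted above;
    treating it as a flat rate is an assumption made for illustration.
    """
    if annual_revenue < 0 or not 0 <= loss_rate <= 1:
        raise ValueError("revenue must be non-negative and loss_rate within [0, 1]")
    return annual_revenue * loss_rate


# The $500M company from the example above.
cost = failed_transformation_cost(500_000_000)
print(f"${cost / 1_000_000:.0f}M")  # $60M
```

Swapping in your own revenue and a more conservative loss rate gives a rough floor for the "cost of getting it wrong" line in a business case.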

The Five Failure Modes

1. Technology-First Thinking

The most common mistake remains treating transformation as a technology project. It isn't. Technology is an enabler, not a destination.

Red Flag

If your transformation roadmap starts with "implement Salesforce" rather than "reduce customer onboarding time by 60%," you're solving the wrong problem.

What we see: Executives approve a $10M platform investment. Eighteen months later, the platform is live, adoption is 30%, and the business case has evaporated.

The data: 45% of leaders feel their company doesn't have the right technology for digital transformation - but the problem isn't technology selection. It's technology-first sequencing.

What works: Start with business outcomes. Work backward to capabilities. Select technology last.

2. Catastrophic Underinvestment in Change Management

New systems require new ways of working. A $5M technology investment requires a $2M change management investment. Most organizations budget $200K.

The result is predictable: expensive tools that nobody uses, or that people use badly. One study found 83% of organizations lacked employees with the change management skills needed for success.

What works: Staff a dedicated change management team from day one. Budget for productivity loss during transition. Measure adoption, not just deployment.
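A rough way to sanity-check a budget against this pattern is sketched below. The 40% ratio is simply derived from the $2M-per-$5M example in this section and should be read as an assumption, not an industry benchmark; the function names are hypothetical.

```python
# Ratio implied by the $2M change budget per $5M technology spend above.
# An illustrative assumption, not a universal rule.
CHANGE_RATIO = 0.40


def change_budget_gap(tech_investment: float,
                      planned_change_budget: float,
                      ratio: float = CHANGE_RATIO) -> float:
    """Return the shortfall against the ratio-based target (0 if the plan meets it)."""
    target = tech_investment * ratio
    return max(0.0, target - planned_change_budget)


def adoption_rate(active_users: int, intended_users: int) -> float:
    """Share of intended users actually using the system: adoption, not deployment."""
    if intended_users <= 0:
        raise ValueError("intended_users must be positive")
    return active_users / intended_users


gap = change_budget_gap(5_000_000, 200_000)
print(f"Shortfall: ${gap:,.0f}")  # Shortfall: $1,800,000
```

Tracking `adoption_rate` alongside the budget gap keeps the conversation on whether people are using the system, rather than whether it went live.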

3. Big Bang Releases

The instinct to "do it right once" leads to 18-month programs that deliver everything at the end - when requirements have changed, sponsors have moved on, and the market has shifted.

We attempted to migrate our entire e-commerce platform over a single holiday weekend. The migration failed at hour 36, causing a four-day outage during peak season and $12M in lost revenue.

What we see: A comprehensive plan with 200 requirements, 50 integrations, and a single go-live date. The project is perpetually "80% complete."

Every month of delay compounds risk. At 18 months, you're not implementing your original vision - you're implementing your original vision plus 18 months of scope creep, stakeholder churn, and market shift.

What works: Deliver value in 90-day increments. Each increment should be independently valuable and inform the next phase. The wave approach - non-critical systems first - builds expertise and reveals hidden dependencies.

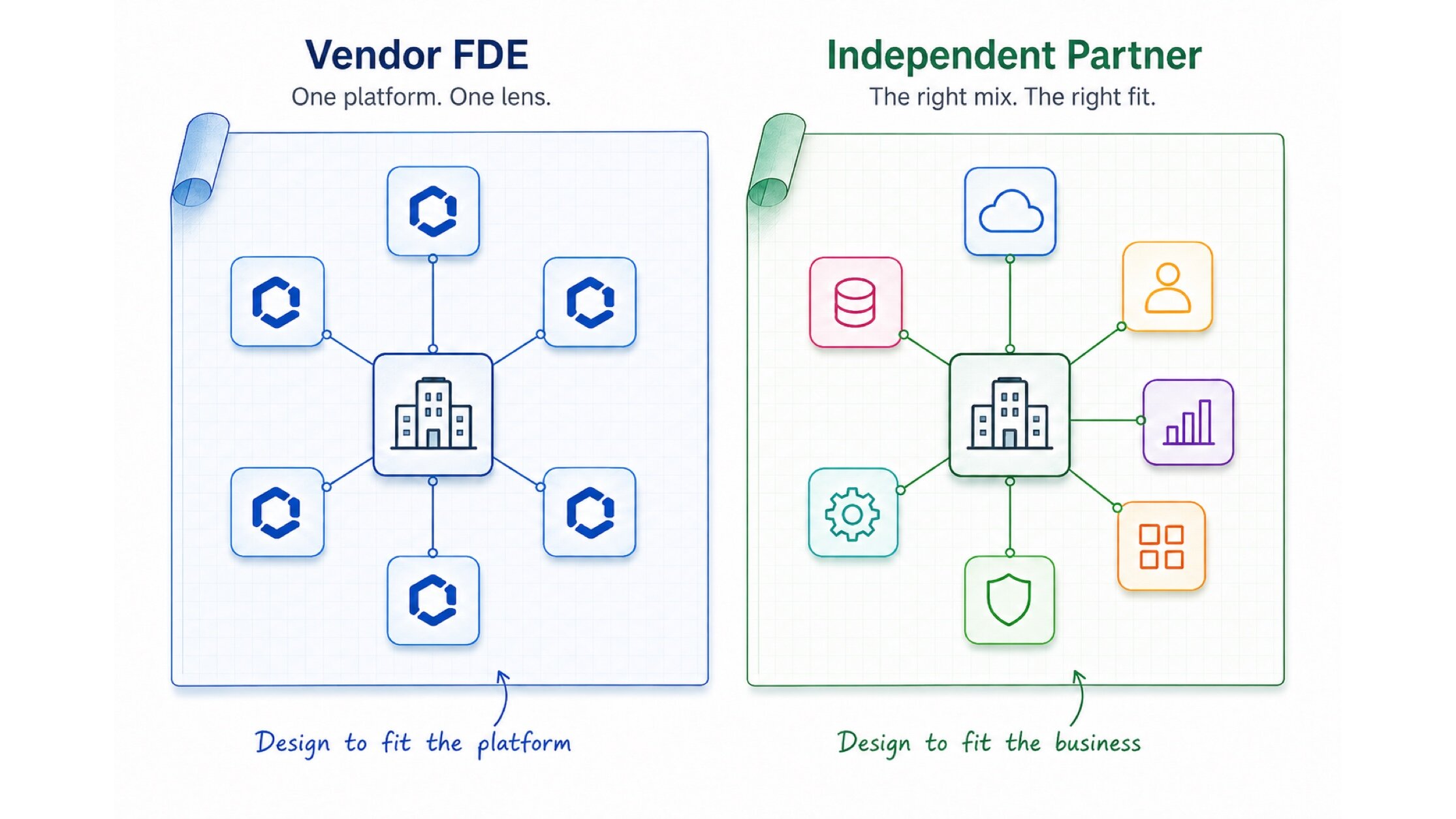

4. Vendor Dependency Syndrome

Enterprise vendors sell platforms as solutions. They're not. They're foundations requiring significant customization, integration, and organizational adaptation.

Case in point: National Grid spent over $1 billion on its SAP implementation. Even after launching, the company spent $100M+ to support and stabilize the system, hiring multiple integrators when internal teams couldn't manage complexity.

The pattern:

- Vendor estimates 6 months; actual timeline is 2 years

- "Out of the box" requires 60% customization

- Integration costs exceed license costs

- Post-launch stabilization doubles budget

What works: Treat vendor estimates as best-case scenarios. Build internal capability alongside vendor implementations. Own your architecture decisions.

5. Success Theater

Transformations generate enormous pressure to show progress. This creates incentives to celebrate milestones that don't matter: contracts signed, systems deployed, training completed.

The Real Metrics

The only metrics that matter are business outcomes: revenue impact, cost reduction, time savings, customer satisfaction. Everything else is activity, not achievement.

What we see: Quarterly steering committees celebrating green status reports while the business case erodes. "On time and on budget" for a system nobody uses.

What works: Define outcome metrics before starting. Report them honestly. Kill initiatives that aren't tracking - before they consume resources that could fund something that works.

The Scale Problem

Organization size dramatically affects success rates:

| Company Size | Success Rate (vs. baseline) | Primary Blocker |
|---|---|---|
| Under 100 employees | 2.7x higher | Limited resources |
| 100-1,000 employees | Baseline | Integration complexity |
| 1,000-50,000 employees | 40% lower | Bureaucratic friction |
| 50,000+ employees | 63% lower | Legacy systems + politics |

Smaller businesses are 2.7x more successful than enterprises with 50,000+ employees. Large organizations struggle with complex legacy systems, bureaucratic decision-making, and resistance to change across multiple departments.

The Kipanga Methodology

Our approach inverts the typical transformation sequence:

Phase 1: Outcome Definition (Weeks 1-4)

Before any technology discussion, we establish:

- Primary metric: The single number that defines success

- Leading indicators: Early signals that predict the primary metric

- Baseline measurement: Current state, rigorously documented

- Target state: Specific, time-bound objectives

If you can't articulate success in one sentence and measure it in one number, you're not ready to transform.
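One way to hold a team to the "one sentence, one number" rule is to write the outcome definition down as a small record. The sketch below is illustrative only: the class and field names are hypothetical, and the onboarding baseline, target, deadline, and indicator are invented numbers chosen to match a 60% reduction.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class OutcomeDefinition:
    primary_metric: str          # the single number that defines success
    baseline: float              # current state, measured before work starts
    target: float                # target state
    deadline: date               # makes the target time-bound
    leading_indicators: list[str] = field(default_factory=list)

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far.

        Works whether the metric should fall or rise.
        """
        span = self.target - self.baseline
        if span == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / span


# Hypothetical example: cut customer onboarding time by 60%.
onboarding = OutcomeDefinition(
    primary_metric="customer onboarding time (days)",
    baseline=10.0,                 # invented for illustration
    target=4.0,                    # 60% below the baseline
    deadline=date(2026, 12, 31),   # invented for illustration
    leading_indicators=["share of applications completed in one session"],
)
print(f"{onboarding.progress(7.0):.0%}")  # 50%
```

If a field in this record cannot be filled in, that is usually the signal that the outcome definition phase is not finished.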

Phase 2: Capability Mapping (Weeks 4-8)

Working backward from outcomes:

- Required capabilities: What the organization must be able to do

- Current gaps: Where capability doesn't exist or isn't sufficient

- Build vs buy: Which gaps require custom development vs vendor solutions

- Sequence: Which capabilities enable others

Phase 3: Pilot Design (Weeks 8-12)

Every transformation starts with a controlled pilot:

- Scope: Small enough to complete in 90 days, large enough to prove value

- Success criteria: Clear, measurable, agreed in advance

- Kill criteria: Conditions under which we stop and reassess

- Scale plan: How we expand if pilot succeeds
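Agreeing success and kill criteria in advance means the pilot decision can be reduced to a simple rule. The sketch below is one possible shape for that rule; every threshold in it (60% adoption to scale, 125% of budget as a hard stop, a 90-day window) is a hypothetical placeholder that a real pilot would negotiate up front.

```python
from dataclasses import dataclass


@dataclass
class PilotReview:
    day: int                   # days since pilot start
    adoption_rate: float       # active users / intended users, 0..1
    budget_spent_ratio: float  # spend to date / approved pilot budget


def pilot_decision(review: PilotReview,
                   *,
                   success_adoption: float = 0.60,  # hypothetical success criterion
                   max_budget_ratio: float = 1.25,  # hypothetical kill criterion
                   pilot_days: int = 90) -> str:
    """Return 'scale', 'continue', or 'stop_and_reassess' for a pilot review."""
    # Kill criterion: overspend triggers a stop at any point in the pilot.
    if review.budget_spent_ratio > max_budget_ratio:
        return "stop_and_reassess"
    # Window still open: keep going and keep measuring.
    if review.day < pilot_days:
        return "continue"
    # Window closed: either the success criterion was met or it was not.
    if review.adoption_rate >= success_adoption:
        return "scale"
    return "stop_and_reassess"


print(pilot_decision(PilotReview(day=90, adoption_rate=0.72, budget_spent_ratio=0.95)))  # scale
```

The value is less in the code than in the fact that the thresholds are fixed before the pilot starts, so a steering committee cannot quietly redefine success afterward.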

Phase 4: Iterative Expansion (Ongoing)

Successful pilots expand systematically:

- Geographic: One region, then others

- Functional: One department, then others

- Depth: Core features, then advanced capabilities

Each expansion cycle includes retrospective analysis and methodology refinement.

Case Study: Financial Services Turnaround

A $2B financial services firm had attempted digital transformation twice. Both efforts exceeded budget by 200%+ and delivered a fraction of intended value.

Their challenges were typical:

- Siloed technology decisions across business units

- No enterprise architecture function

- Vendor-dependent delivery model

- Success measured by project completion, not business impact

Our engagement focused on methodology, not technology:

Year 1: Established outcome-based success metrics, built internal architecture capability, piloted new methodology with single business unit.

Year 2: Expanded proven approaches across three additional units, consolidated vendor relationships, established enterprise platform team.

Results:

The technology wasn't dramatically different from their previous attempts. The methodology was.

The Executive Checklist

Before approving any transformation initiative, demand answers:

Strategy

- What business outcome are we solving for?

- How will we measure success?

- What's the cost of doing nothing?

Execution

- What value will we deliver in the first 90 days?

- Who owns this after the consultants leave?

- How will we handle the inevitable setbacks?

Organization

- How will roles and processes change?

- What's our investment in change management?

- How will we maintain productivity during transition?

The Ultimate Test

If your transformation team can't explain the business case to a new board member in five minutes, the initiative isn't ready for approval.

The 2026 Context

Three factors make this year particularly critical:

AI readiness requires modern foundations. Legacy systems are the primary barrier to autonomous AI adoption. Agents require data liquidity and API-first architectures that mainframes and siloed systems cannot provide. Transformation now has an AI imperative.

The talent crunch is real. 54% of IT professionals cite lack of expertise as a major barrier. The skills gap in cloud, AI, and data analytics isn't closing - it's widening.

Regulatory pressure is mounting. ESG reporting, data privacy, and AI governance requirements demand modern, auditable systems. Technical debt has compliance implications.

Digital transformation isn't optional - it's a competitive necessity. But the solution isn't more technology or bigger budgets. It's better methodology: proving value incrementally, measuring outcomes relentlessly, and building organizational capability alongside technical capability.

Ready to approach transformation differently? Let's discuss your strategic priorities.

20 years in the industry specializing in content management and project management systems. Leads the offshore development team, bridging clients and developers. Expert in database administration and quality assurance.
